The Role of Artificial Intelligence in Reducing Dispensing Errors for | RMHP

Introduction

Medication errors, including prescribing, dispensing, administration, and monitoring-related failures, represent a significant concern in healthcare because of their potential to cause harm to patients.1 In the UK alone, such errors contribute to approximately £98 million annual losses and an estimated 1708 deaths.2 As these errors can occur at any stage of the medication use process and often involve multiple healthcare professionals, ensuring patient safety remains a complex and multidimensional challenge. Defined as preventable events that may lead to inappropriate medication use or patient harm, medication errors highlight the urgent need for robust systems, standardized practices, and technological innovations to minimize risks and enhance medication safety.3,4

Among the various types of medication errors, dispensing errors are particularly critical as they directly affect the accuracy and safety of medication administration to patients. A recent study5 defined dispensing errors as any discrepancy between the medication prescribed and the medication supplied to the patient by a pharmacy or a healthcare provider. These errors can stem from multiple causes, including environmental factors such as high workload, staff shortages, and distractions; product design factors such as similar packaging, comparable strengths, and unclear labeling; and human- or team-related factors such as communication lapses, fatigue, inexperience, and failure to conduct necessary checks.6,7 The reported rates of dispensing errors in hospital settings vary widely across countries,8 with consequences ranging from mild side effects to severe outcomes, including permanent harm or death.

Therefore, efforts to improve the reliability and safety of medication dispensing have focused on enhancing accuracy, consistency, and accountability across the dispensing process.9 Interventions include policy reforms, workflow redesigns, and adoption of technologies such as electronic prescriptions (e-prescribing) and barcoding systems.10 These advancements have demonstrated measurable benefits, yet dispensing errors remain a persistent challenge, highlighting the need for more intelligent, adaptive, and data-driven solutions.

Artificial Intelligence (AI) has emerged as a promising approach to address medication-dispensing errors in healthcare systems.11,12 By leveraging data-driven algorithms, such as machine learning (ML) and natural language processing (NLP), AI systems can analyze vast datasets to identify potential discrepancies in real time, often more efficiently and consistently than traditional manual methods. Traditional safeguards, such as double-checking, barcode scanning, and pharmacist verification, remain essential, but can be limited by human fatigue, workload pressures, and procedural variability.13–15 AI-driven systems have been shown in selected studies to augment these safeguards by cross-referencing prescriptions with patient records, flagging inconsistencies, and generating alerts for potential risk, particularly in high-volume or data-rich environments.16,17 Moreover, robotic dispensing systems powered by AI have demonstrated the ability to automate routine dispensing tasks, further reducing the likelihood of human error.18–20 In practice, such robotic dispensing systems have been deployed in hospital and large community pharmacy settings to support high-volume, repetitive tasks such as medication selection, packaging, and labeling. Studies from these environments report reductions in wrong-drug and wrong-dose errors, more consistent application of safety checks, and improvements in workflow efficiency, particularly during peak workload periods. Beyond direct error reduction, robotic systems can free pharmacists and pharmacy technicians to focus on clinical verification and counseling, thereby shifting effort from manual handling to higher-value safety activities. Collectively, the integration of AI into dispensing workflows represents a proactive strategy for improving medication safety, reducing errors, and enhancing patient outcomes.21

Despite these advancements, a notable research gap remains in understanding how AI technologies interact with the broader healthcare ecosystem. Although AI offers significant potential to mitigate dispensing errors, few studies have examined these systems through a systems thinking lens, which is essential for understanding interdependencies within complex healthcare environments.22 Systems thinking enables researchers and practitioners to explore how technologies influence workflow dynamics, stakeholder roles, regulatory processes, and organizational constraints. It also facilitates the identification of unintended consequences and feedback loops that may arise from technological intervention. Robotic dispensing systems are one visible example of how AI can reshape roles, workflows, and infrastructure within dispensing; however, their effects depend on how they are integrated with people, processes, and governance structures. Rather than focusing on the technology in isolation, our perspective uses these AI and robotic applications as concrete cases to examine how different system components must align to deliver sustainable improvements in dispensing safety.

In healthcare, systems thinking provides a holistic framework for improving safety and performance by emphasizing the interactions and dependencies among components, rather than viewing them in isolation. In the context of AI-based medication dispensing, this perspective helps identify points where AI integration may enhance or disrupt existing practices. By incorporating these insights, healthcare systems can design solutions that are more resilient, adaptive, and sustainable.23

Accordingly, this study aimed to examine the role of AI in reducing medication dispensing errors through a systems thinking approach. By integrating insights across the dimensions of people, system, design, and risk, this study sought to identify the key opportunities, challenges, and pathways for implementing AI technologies to improve patient safety and healthcare quality.23

Methods

In this perspective, we adopted a systems approach23 to analyze the potential of AI-driven solutions for mitigating medication-dispensing errors in clinical settings. Systems thinking refers to a holistic analytical framework that examines how people, technologies, workflows, organizational structures, and risks interact dynamically within the medication-use process. Rather than evaluating AI tools in isolation, a systems thinking approach emphasizes interdependencies, feedback loops, and unintended consequences that may emerge when AI is integrated into real-world healthcare environments. This perspective draws on established sociotechnical systems theory, recognizing that improvements in medication safety require alignment between human factors, digital infrastructure, governance, and clinical workflows. The methodological process involved two primary stages: first, a focused review of existing AI-driven approaches in the literature and second, an analytical assessment of these approaches through the lens of the systems approach framework. Although the number of empirical studies directly addressing AI in dispensing safety is limited, this review serves as a conceptual foundation for informing and contextualizing system-based analyses.

The focused review aimed to identify representative and influential studies on AI applications relevant to medication dispensing errors, rather than to exhaustively capture all available evidence. The review was therefore conducted to support conceptual synthesis and systems-based analysis, consistent with the perspective nature of this paper.

Literature searches were conducted in major bibliographic databases commonly used for healthcare and engineering research. Searches covered publications to capture both early foundational work and recent advances in AI-enabled dispensing support. Studies were considered for inclusion if they (i) addressed medication dispensing or closely related pharmacy processes, (ii) described or evaluated an AI-based method or algorithm, and (iii) reported relevance to error detection, prevention, or risk mitigation. Titles and abstracts were screened by the authors to assess relevance, followed by full-text review of eligible articles. Given the conceptual aim of the paper, no formal quality scoring or meta-analysis was performed. Instead, included studies were purposively selected to represent diverse AI techniques, error mechanisms, and application contexts, and were subsequently analysed using the systems approach framework across people, system, design, and risk perspectives.

The systems approach applied in this study draws on the framework proposed by Clarkson et al,23 which has been used to guide healthcare design and improvement. The systems approach comprises four fundamental and interrelated perspectives: people, systems, design, and risk.

The people perspective emphasizes understanding the roles, needs, and interactions of all stakeholders involved in care delivery, including patients, clinicians, pharmacists, and administrators. The systems perspective focuses on the complex interdependencies among healthcare processes, technologies, and organizational structures that shape the medication management and dispensing workflow. The design perspective concerns the creation of user-centered, practical, and context-sensitive solutions that can be effectively integrated into existing healthcare environments. The risk perspective highlights the early identification, assessment, and mitigation of potential failures or unintended consequences arising from the development and implementation of technological interventions.

By employing these four perspectives, this study provides a structured framework for examining how AI can be effectively designed, implemented, and governed to enhance the safety and reliability of medication dispensing processes.

Results

Earlier studies collectively demonstrated the potential of AI in improving medication dispensing, highlighting the need for greater dataset diversity, tighter system integration, and real-time processing. As these capabilities mature, they are setting new benchmarks for accuracy, safety, and efficiency in clinical workflows. Table 1 summarizes the reviewed studies by AI method, application area, limitations, and future directions, and indicates that reported gains in error detection, classification accuracy, and workflow efficiency are heterogeneous across evaluation contexts.

Table 1 Summary of AI Applications in Dispensing Error Management

Evidence Organized by Error Mechanism

Name- and Terminology-Related Errors

NLP has been deployed to parse and analyze hospital safety reports, illustrating how AI can accelerate learning from free-text narratives.24 A key gap is the limited integration of advanced medical ontologies, especially the Systematized Nomenclature of Medicine-Clinical Terms (SNOMED CT), which enables consistent coding and semantic interoperability across systems.31,32 Enriching NLP with such ontologies can deepen the interpretation of clinical language and reduce errors in medication labeling and descriptions.

At the same time, Fong et al noted practical hurdles: processing large volumes of text, inaccuracies in manual categorization, and the labor required for human review.24 Algorithmic support for auto-categorization and trend detection can reduce review time and trigger earlier interventions.
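
To make the trend-detection idea concrete, the following minimal sketch flags weeks in which the number of reports in one error category rises sharply above its recent baseline. It is an illustration only and not the method used by Fong et al; the function name `flag_spikes`, the four-week window, the z-score threshold, the floor on the standard deviation, and the weekly counts are all hypothetical choices.

```python
from statistics import mean, stdev


def flag_spikes(weekly_counts, window=4, z_threshold=3.0):
    """Return indices of weeks whose count is far above the trailing baseline.

    All parameters are illustrative assumptions, not validated settings.
    """
    flagged = []
    for i in range(window, len(weekly_counts)):
        history = weekly_counts[i - window:i]
        baseline = mean(history)
        # Floor the spread at 1.0 so a flat history does not divide by ~0
        # and turn a one-report increase into a "spike" (assumed heuristic).
        spread = max(stdev(history), 1.0)
        z_score = (weekly_counts[i] - baseline) / spread
        if z_score >= z_threshold:
            flagged.append(i)
    return flagged


# Hypothetical weekly counts of "wrong label" reports.
counts = [3, 4, 2, 3, 4, 3, 12, 4]
spikes = flag_spikes(counts)
print(spikes)
```

In a deployed setting, a flag of this kind would plausibly prompt human review of the underlying reports rather than trigger any automated action.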

Complementing NLP, Tseng et al used statistical methods with logistic regression to detect misnaming before it reached patients, showing that a systematic analysis of dispensing data could predict and mitigate naming discrepancies.25 Challenges include incorporating diverse variables, achieving reliability in real-world conditions, and addressing data quality. Opportunities include feedback-driven model refinement, hybrid (fusion) algorithms, and expert-guided improvements.25 In the context of dispensing, improved interpretation of medication names and terminology directly supports accurate labeling and reduces the risk of misidentification before medications reach patients.
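
As a simple illustration of screening for confusable medication names, the sketch below scores string similarity between every pair of names in a list and flags pairs above a cut-off. It uses generic string matching from the Python standard library, not the logistic regression approach of Tseng et al; the function name `similar_name_pairs`, the 0.7 threshold, and the three-item formulary are assumptions made for the example.

```python
from difflib import SequenceMatcher
from itertools import combinations


def similar_name_pairs(names, threshold=0.7):
    """Return (name_a, name_b, score) for pairs with similarity >= threshold.

    The threshold is an illustrative assumption and would need local tuning.
    """
    pairs = []
    for a, b in combinations(sorted(names), 2):
        # ratio() is a generic similarity in [0, 1]; it is orthographic only
        # and does not capture how names sound when spoken.
        score = SequenceMatcher(None, a.lower(), b.lower()).ratio()
        if score >= threshold:
            pairs.append((a, b, round(score, 2)))
    return pairs


# Hypothetical formulary extract.
formulary = ["hydroxyzine", "hydralazine", "metformin"]
flagged = similar_name_pairs(formulary)
for name_a, name_b, score in flagged:
    print(f"Review pair: {name_a} / {name_b} (similarity {score})")
```

A list of flagged pairs could feed shelf separation, label differentiation, or an additional verification prompt; which of these is appropriate remains a local pharmacy decision.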

Packaging/Appearance-Related Errors (Look-Alike, Sound-Alike)

Deep learning image classification has been applied to identify and sort medications according to their visual attributes (shape, color, packaging), directly targeting misidentification risks.29 Because medication selection and final product verification are central dispensing tasks, image-based identification tools are particularly well suited to preventing look-alike and sound-alike errors at the point of dispensing. A real-time Blister Package Recognition approach similarly automates recognition using induced deep learning to mimic human perception, trained on large blister-image datasets under standardized lighting, thereby achieving high recognition accuracy.30 Operational challenges remain, such as the limited diversity of training data, fine-grained differentiation among highly similar packages, and meeting real-time constraints in pharmacy settings.30 The authors emphasize maintaining human oversight to avoid over-reliance on automation and to preserve pharmacist accountability.30

Workflow/Resource-Related Errors

Ant Colony Optimization (ACO) models inventory flows to optimize stocking and dispensing, thereby reducing the risk of outdated or incorrect medications being issued.26 While balancing staff allocation and checking strategies within budget constraints is complex, ACO-based systems can enhance patient safety, lower error rates, and improve operational efficiency through simulation-guided decision-making. By optimizing inventory availability and reducing process variability, these approaches indirectly support safer dispensing by minimizing time pressure and reliance on workarounds.26

Classification and Interaction Screening

You and Lin described a two-stage deep learning (DL) architecture that first learns from large drug datasets and then refines the classification for complex interactions and contraindications, improving downstream dispensing accuracy.27 Deep learning here refers to multi-layer neural network models capable of identifying complex patterns in large datasets. Persistent issues include limited explainability and regulatory considerations, including medications with boxed warnings (terminology may vary by country);33 accountability when AI contributes to an error;34 limited generalizability from narrow training data;35 and evolving regulatory standards for development, validation, and deployment.36,37 Even so, careful integration of DL classifiers can enhance patient safety, streamline workflow, and reduce costs.33–37 Although medication classification and interaction screening are often associated with prescribing, these functions are also highly relevant to dispensing. Identifying contraindications and high-risk drug combinations enables enhanced verification, targeted labeling, and pharmacist review prior to medication release, positioning DL-based classification as a dispensing safeguard rather than a prescribing intervention. Integrated into dispensing workflows in this way, DL-based classifiers can improve dispensing accuracy without removing human oversight.

Risk-Stratified Dispensing (High-Risk Medications)

Bayesian Neural Networks (BNNs) incorporate uncertainty estimates to evaluate multiple risk factors in parallel, enabling the adaptive prediction and prevention of errors involving high-risk medications.28 This proactive approach supports earlier hazard detection but raises human factor considerations around transparency and professional roles. Here, human–AI teaming, pairing computational precision with clinical judgment, offers a pragmatic path to safer decisions.28,33,34 Within dispensing workflows, risk stratification supports prioritization of verification steps for high-risk medications, enabling pharmacists to allocate attention where it is most needed.
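
The way uncertainty estimates might drive prioritization can be sketched as follows. The example assumes that a probabilistic model (for instance, a BNN) returns several sampled risk estimates per dispensing item; it then maps the mean and spread of those samples to a verification tier. The function name `triage`, the tier labels, and both thresholds are hypothetical and are not taken from the cited studies.

```python
from statistics import mean, pstdev


def triage(risk_samples, high_risk=0.5, max_spread=0.15):
    """Map sampled risk estimates for one item to a verification tier.

    risk_samples: probabilities in [0, 1], e.g. repeated stochastic
    forward passes of a probabilistic model (assumed input format).
    """
    risk = mean(risk_samples)
    spread = pstdev(risk_samples)
    if spread > max_spread:
        # The model disagrees with itself: defer to pharmacist judgment.
        return "escalate"
    if risk >= high_risk:
        # Confident high risk: request an independent double check.
        return "enhanced"
    return "routine"


# Hypothetical sampled risks for three dispensing items.
tier_confident_high = triage([0.82, 0.78, 0.85, 0.80])
tier_uncertain = triage([0.20, 0.70, 0.40, 0.90])
tier_low = triage([0.05, 0.08, 0.06, 0.07])
print(tier_confident_high, tier_uncertain, tier_low)
```

Checking uncertainty before risk is a deliberate choice in this sketch: an item with a widely scattered estimate is routed to a person regardless of its average, which keeps the pharmacist in the loop precisely where the model is least reliable.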

A Systems Approach for AI-Based NLP Application

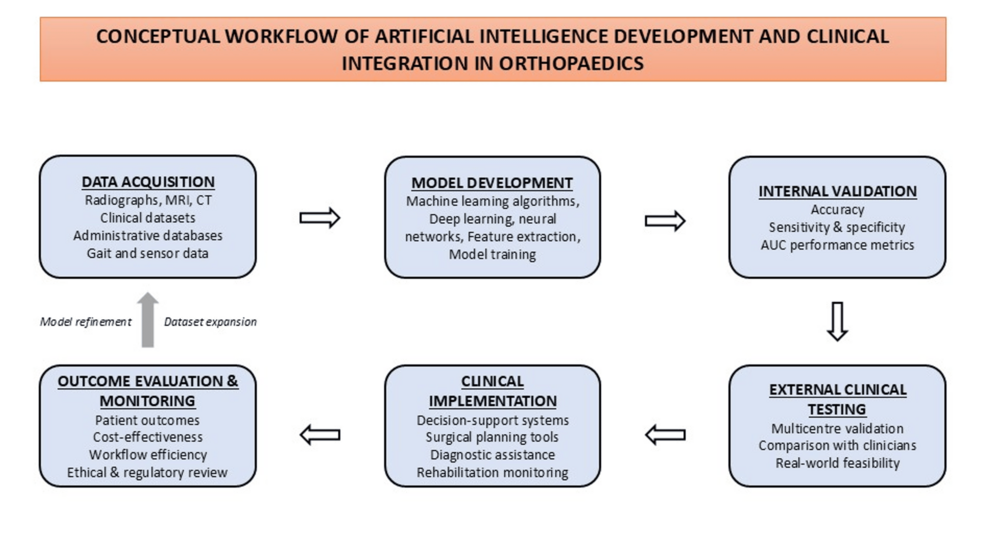

Among the surveyed approaches, AI-based NLP systems appeared most frequently. Figure 1 illustrates the conceptual technical flow.

Figure 1 System flow of the AI-based NLP system for reducing dispensing error in the healthcare sector.

Prescription and dispensing data originating from clinicians, patients, and pharmacies enter the system through a secure application. These systems typically operate through application programming interfaces (APIs), which enable automated data exchange between electronic health records (EHRs), pharmacy dispensing systems, and the AI engine; medication-related data are extracted automatically via these existing interfaces, so no manual data entry by pharmacy staff is required. NLP and machine learning models analyze structured and unstructured data to identify potential dispensing risks, such as labeling discrepancies, look-alike/sound-alike medications, or high-risk drug characteristics. In the event of a discrepancy, the system provides decision-support alerts or risk flags to pharmacists and pharmacy technicians within existing dispensing workflows. The AI does not recommend or select medications; final dispensing decisions remain under human supervision. Depending on institutional infrastructure, the AI application may be embedded within existing pharmacy software or accessed through a secure web-based interface; it does not function as a standalone mobile application. We analyzed the NLP concept using the Engineering Better Care framework across four perspectives: people, system, design, and risk, guided by the questions shown in Figure 2.
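
The discrepancy-flagging step in this flow can be illustrated with a deliberately simple, rule-based sketch that compares a prescribed item with the item prepared for dispensing and returns flags for human review. It does not represent any specific system in the reviewed studies; the record fields, the class name `MedicationRecord`, the function name `find_discrepancies`, and the flag wording are assumptions, and real systems would draw these values from EHR and pharmacy interfaces.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MedicationRecord:
    """Hypothetical minimal record; real interfaces carry many more fields."""
    drug: str
    strength_mg: float
    form: str


def _normalize(text):
    return text.strip().lower()


def find_discrepancies(prescribed, dispensed):
    """Return a list of human-readable flags; an empty list means no mismatch."""
    flags = []
    if _normalize(prescribed.drug) != _normalize(dispensed.drug):
        flags.append("wrong drug")
    if prescribed.strength_mg != dispensed.strength_mg:
        flags.append("wrong strength")
    if _normalize(prescribed.form) != _normalize(dispensed.form):
        flags.append("wrong form")
    return flags


# Hypothetical example: correct drug and form, wrong strength selected.
order = MedicationRecord("Hydralazine", 25.0, "tablet")
picked = MedicationRecord("hydralazine ", 50.0, "Tablet")
alerts = find_discrepancies(order, picked)
print(alerts)
```

Consistent with the flow described above, the function only raises flags; it neither selects a medication nor blocks dispensing, and the decision stays with the pharmacist or technician.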

Figure 2 System-thinking framework of an AI-based NLP system to reduce medication dispensing errors.

This framework involves critical questions regarding user engagement, system integration, ethical design, and safety assurance.

People

Identify–Locate–Situate

Primary users of these systems are pharmacists and pharmacy technicians involved in dispensing and verification. Patients are not direct users of the technology but are beneficiaries of improved safety outcomes. Oversight and governance are provided by healthcare organizations and regulatory bodies, with data use governed by institutional policies rather than individual patient interaction. The system is deployed across hospitals, clinics, and pharmacies and integrates e-prescriptions and EHRs. Performance depends on data quality, team collaboration, and compliance with privacy/security regulations. Targeted training and human-AI collaboration improve safe use.

System

Understand–Organize–Integrate

Stakeholders included pharmacy staff, patients, clinicians, health-system leadership, and technology vendors. The core elements include NLP algorithms, data integration/processing pipelines, user interfaces with dashboards, and feedback mechanisms for iterative improvement, all of which are critical for clinician situational awareness.38 Performance evaluation should encompass accuracy, calibration, sensitivity/specificity, efficiency, scalability, robustness to language/data variations, adaptability via learning loops, and user experience. External validation across sites protects against overfitting and confirms generalizability, while ethical/legal compliance protects patient rights and trust.
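
Several of the evaluation measures named above have standard definitions that can be computed directly from labeled alert data. The sketch below calculates sensitivity, specificity, and the Brier score (a common summary of probabilistic accuracy that reflects calibration) for a set of risk scores. The function name `alert_metrics`, the 0.5 alerting threshold, and the six example cases are hypothetical.

```python
def alert_metrics(labels, scores, threshold=0.5):
    """Compute sensitivity, specificity, and Brier score.

    labels: 1 if a true dispensing error was present, else 0.
    scores: model-estimated probability of an error, in [0, 1].
    """
    tp = fp = tn = fn = 0
    for y, p in zip(labels, scores):
        alerted = p >= threshold
        if alerted and y == 1:
            tp += 1
        elif alerted and y == 0:
            fp += 1
        elif not alerted and y == 1:
            fn += 1
        else:
            tn += 1
    sensitivity = tp / (tp + fn) if (tp + fn) else float("nan")
    specificity = tn / (tn + fp) if (tn + fp) else float("nan")
    # Brier score: mean squared gap between predicted probability and outcome.
    brier = sum((p - y) ** 2 for y, p in zip(labels, scores)) / len(labels)
    return {"sensitivity": sensitivity, "specificity": specificity, "brier": brier}


# Hypothetical validation cases.
labels = [1, 1, 0, 0, 1, 0]
scores = [0.9, 0.4, 0.2, 0.6, 0.8, 0.1]
metrics = alert_metrics(labels, scores)
print(metrics)
```

Running the same calculation separately for each site or patient subgroup is one straightforward way to approach the external validation and equity checks discussed in this paper.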

Risk

Exercise–Assess–Improve

As noted in Figure 1, the data flows revealed three end-user touchpoints (pharmacies, patients, and clinicians) and EHR integration. Potential failure modes include NLP misinterpretation, security/privacy breaches, reduced transparency/accountability, and over-automation. Mitigations include periodic model audits and updates, encryption and access controls, adoption of explainable-AI techniques, and clear governance for ethical use. A system lens aligns the technical, human, and organizational safeguards for robust deployment. Importantly, the AI-enabled processes described do not introduce an additional step prior to or during dispensing. Instead, they automate background checks that would otherwise rely on manual review or memory, thereby reducing cognitive load, minimizing workflow interruptions, and lowering the likelihood of dispensing errors.

Design

Explore–Create–Evaluate

Needs include high precision in interpreting prescriptions and interactions, efficient analysis as data volumes grow, and trustworthy human-AI collaboration. Meeting these needs requires fit-for-purpose ML, computer vision, and NLP methods; human-centered interfaces that align with existing workflows; and transparency features that counter automation bias and support oversight. Continuous user feedback (eg, surveys and focus groups) should guide iterative refinement. As noted by Adetemi et al, high-quality data, bias mitigation, and transparency are prerequisites for an equitable AI deployment.39

Discussion and Integration

Across techniques such as NLP with clinical ontologies, statistical modeling, computer vision, optimization, deep learning classifiers, and Bayesian neural networks, AI shows consistent promise for improving the safety, timeliness, and efficiency of dispensing processes. These approaches address distinct but complementary failure modes within the dispensing pathway: NLP and ontologies reduce terminology- and documentation-related errors; computer vision addresses look-alike/packaging risks; statistical and deep learning models support classification and interaction screening; optimization methods improve inventory reliability; and probabilistic models enable calibrated risk stratification for high-risk medications. Taken together, this complementarity suggests that composite multi-modal toolchains may offer greater protection than any single technique alone.

Importantly, the potential value of such composite approaches extends beyond error reduction. When appropriately integrated, AI-enabled systems can stabilize workflows, reduce manual rework, and provide earlier signals of deteriorating safety conditions (eg, sudden spikes in specific error types), thereby supporting organizational learning and resource stewardship. In this sense, AI contributes not only to safer dispensing outcomes, but also to more resilient and adaptive medication-use systems.

For readers less familiar with health informatics and AI, several terms warrant clarification. NLP refers to computational techniques that enable systems to interpret and analyze free-text clinical language. Machine learning and deep learning describe data-driven methods that identify patterns in large datasets, with deep learning using multi-layer neural networks to capture more complex relationships. In this context, decision support denotes tools that assist clinicians by highlighting risks or inconsistencies, while final clinical decisions remain under human control.

Realizing these benefits, however, depends on careful socio-technical design and integration. Human–AI teaming is therefore central: decision support should augment (not replace) clinical judgment, provide calibrated confidence estimates, and make uncertainty visible to end-users. Appropriately designed guardrails, such as escalation prompts for ambiguous cases, tiered alerting, and “explain-why” interfaces, can promote appropriate reliance and mitigate automation bias.

Implementation is also shaped by the substantial heterogeneity of EHR data, electronic prescribing systems, and wider healthcare information infrastructures across settings. Variations in data standards, user interfaces, workflow configurations, and interoperability capabilities can significantly influence how AI-enabled tools are integrated into dispensing processes. Systems developed and validated within a single institutional context may not translate seamlessly to other environments without adaptation. Consequently, successful deployment requires flexible architectures, standardized data models, and close collaboration with local clinical and informatics teams to ensure alignment with existing workflows.

From a systems perspective, sustainable integration requires coordinated alignment across people, system, design, and risk. People-centric adoption (training, role clarity, feedback loops) reduces resistance and supports trust; system-aware deployment (interoperability, throughput fit, error-recovery pathways) preserves workflow integrity; design choices (human-centered interfaces, transparency features, cognitive load minimization) support safe use under time pressure; and proactive risk management (hazard analysis,40 model monitoring,41 incident learning42) helps prevent the emergence of new error modes prior to scale-up. These dimensions are interdependent; for example, transparency features (design) can shape appropriate reliance (people), whereas monitoring model drift (risk) depends on robust telemetry and data plumbing (system).

Although progress is notable, important scope and implementation gaps persist, particularly around data representativeness, external validity, real-time performance under operational constraints, and post-deployment governance.43 Figure 3 depicts these gaps and articulates the research and implementation agenda for addressing them.

Figure 3 Gaps and future research agenda for dispensing errors in hospitals and artificial intelligence.

Addressing these gaps requires coordinated advances across multiple domains. With respect to data breadth and multimodality, models should jointly leverage structured data (eg, medication lists and demographics) and unstructured data (eg, clinical notes, images, and free-text prescriptions) to strengthen real-time detection and contextual understanding. This in turn requires curating diverse training corpora across sites, populations, formularies, and packaging variants, and benchmarking external validity across institutions.38,44 In parallel, transparency, ethics, and governance must be embedded throughout the model lifecycle: explainability, auditability, and systematic bias assessment should accompany data protection, access control, and incident-response mechanisms, with clearly delineated accountability for AI-supported decisions.37,39,45

In pursuit of rigorous evaluation and monitoring, prospective designs (eg, stepped-wedge or hybrid effectiveness-implementation studies) should include pre-specified safety and performance endpoints, eg, calibration, sensitivity/specificity, alert burden, and equity metrics by subgroup, while continuous model-drift surveillance, version control, and rollback procedures maintain performance over time.
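
One widely used way to operationalize drift surveillance is the population stability index (PSI), which compares the distribution of recent model scores with a reference distribution from validation. The sketch below is a minimal illustration that assumes scores lie in [0, 1]; the function name `population_stability_index`, the ten equal-width bins, the small-value floor, the 0.25 review threshold (a common rule of thumb, not a clinical standard), and the example scores are all assumptions.

```python
import math


def population_stability_index(expected, actual, bins=10, eps=1e-6):
    """PSI between two sets of scores in [0, 1], using equal-width bins."""

    def proportions(values):
        counts = [0] * bins
        for v in values:
            # Clamp so that a score of exactly 1.0 falls in the last bin.
            idx = min(int(v * bins), bins - 1)
            counts[idx] += 1
        total = len(values)
        # Floor empty bins at eps to keep the logarithm defined.
        return [max(c / total, eps) for c in counts]

    e = proportions(expected)
    a = proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Hypothetical risk scores at validation time and in recent production use.
baseline = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8]
recent = [0.7, 0.8, 0.9, 0.9, 0.8, 0.7, 0.9, 0.8]

psi_same = population_stability_index(baseline, baseline)
psi_shifted = population_stability_index(baseline, recent)
needs_review = psi_shifted > 0.25  # assumed trigger for review or rollback
print(psi_same, needs_review)
```

A drift signal of this kind indicates only that inputs or outputs have changed, not that performance has degraded; it is best read as a prompt for re-evaluation against labeled cases before any rollback decision.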

From a human factors and workflow integration standpoint, applying human-centered design can minimize cognitive load, surface uncertainty, and support appropriate reliance; simulation, usability testing, and participatory co-design with pharmacists, technicians, and prescribers should be paired with training that covers model capabilities and limitations, plus escalation pathways for ambiguous cases.

To ensure interoperability and operational resilience, systems should be engineered for robust connections with e-prescribing, EHR, and dispensing platforms, with defined fail-safe modes, latency budgets, and error-recovery protocols that preserve safe operations during outages or degraded performance, and with technical service-level objectives aligned with clinical safety goals.

To promote equity and generalizability, performance should be assessed across formulations, languages, packaging variations, and diverse patient populations, with targeted data augmentation and recalibration applied where disparities are detected, to improve fairness without compromising safety.

Regarding implementation governance and value realization, pre-deployment evaluation should address technical, operational, and ethical feasibility using a system-based lens to ensure context-appropriate adoption, followed by post-implementation review cycles that integrate safety incidents, user feedback, and cost data to iteratively refine models and workflows.37,39,45

Collectively, these directions aim to translate promising technical advances into reliable, transparent, and equitable improvements in dispensing safety. By coupling multi-modal AI methods with disciplined sociotechnical integration, health systems can strengthen medication safety while maintaining professional accountability and patient trust. At the same time, the current evidence base remains preliminary. Many studies are retrospective, simulation-based, or conducted in single-institution settings, with limited prospective evaluation under real-world operational constraints. Quantitative outcomes vary widely by context, data quality, and implementation maturity; therefore, reported effectiveness should be interpreted as indicative rather than definitive.

Consistent with this evidence base, the value of composite multimodal AI toolchains lies not only in reducing dispensing errors, but also in delivering broader system-level benefits. NLP-based analyses support faster learning from unstructured safety data; image-based recognition and classification reduce manual verification and rework; optimization approaches stabilize medication availability; and risk-stratification models improve prioritization by directing attention to high-risk cases.

Crucially, these benefits depend on clearly defined roles for healthcare professionals. Pharmacists and pharmacy technicians retain responsibility for contextual interpretation of AI outputs, validation of alerts, and final dispensing decisions. Clinicians and pharmacy staff supervise AI-supported processes through escalation, override, and documentation for accountability and learning. Healthcare professionals also contribute to governance through feedback, usability testing, co-design, and integration of AI-related incidents into organizational learning systems. Thus, AI functions as a background decision-support and prioritization tool, while healthcare professionals remain central to safety assurance and system management.

The novelty of this work therefore lies not in reporting new empirical effect sizes, but in offering a systems-level synthesis of AI-enabled approaches to dispensing safety. Unlike studies that examine individual techniques in isolation, this perspective integrates diverse methods within a unified people–system–design–risk framework, revealing cross-cutting dependencies and implementation considerations that are not apparent from single-method or single-site evaluations. Accordingly, this paper distinguishes between evidence-backed findings, conceptual propositions derived from systems thinking, and forward-looking recommendations for research and practice.

Finally, governance, ethical oversight, and regulatory compliance are best understood as emergent properties of sociotechnical systems. Accountability, auditability, data governance, explainability, and mitigation of automation bias arise from coordinated interactions among people, workflows, organizational policies, and digital infrastructure—not from technical controls alone. This systems framing is particularly critical in medication dispensing, where responsibility is distributed across professionals, institutions, and technologies, and where failures often reflect misalignment between system components rather than algorithmic performance in isolation.

Conclusion

This perspective argues that reducing medication dispensing errors with AI, exemplified by NLP-enabled decision support, requires more than algorithmic accuracy. It demands a sociotechnical systems approach that integrates people, system, design, and risk. From a people perspective, sustained engagement with pharmacists, technicians, prescribers, patients, and administrators through co-design, training, and implementation fosters trust, appropriate reliance, and accountability. At the system level, interoperable data pipelines, rigorous privacy and security safeguards, and continuous post-deployment monitoring (including incident learning) are essential for maintaining reliability in real-world workflows. Design considerations must prioritize human-centered interfaces, transparency of model rationale and uncertainty, and fit-for-workflow integration to minimize cognitive load and alert fatigue. A proactive risk perspective spanning ethical review, bias assessment, hazard analysis, and well-defined escalation and fallback modes helps mitigate new error modes before scale-up.

At the same time, intrinsic limitations of AI systems mean that they cannot be assumed to eradicate dispensing errors. Models may hallucinate, become miscalibrated, or behave unpredictably under data shifts in ways that are difficult for humans to detect. These challenges underscore the need for AI to be positioned as a fallible decision-support tool embedded within a learning system, rather than as an infallible replacement for human judgment.

Future research should therefore focus on systematically characterizing and mitigating these AI-specific risks. Priority areas include: developing and validating methods to detect and manage hallucinations and other silent failure modes (for example, through robust uncertainty quantification, calibration, and anomaly detection); designing prospective and pragmatic evaluations that incorporate safety, reliability, and equity endpoints; and implementing continuous model monitoring, drift detection, and rollback mechanisms that can be triggered when performance degrades.
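To make the monitoring and rollback idea concrete, the following is a minimal illustrative sketch, not a validated clinical method. It assumes a hypothetical dispensing-alert model that outputs a probability of error for each dispensed item, and that pharmacist-verified outcomes (1 = confirmed error, 0 = no error) become available for a recent window of cases. It computes the expected calibration error (ECE) for that window and maps it to an action. The function names and the thresholds `review_at` and `rollback_at` are assumptions chosen for illustration only.

```python
from dataclasses import dataclass
from typing import Sequence


def expected_calibration_error(
    probs: Sequence[float], labels: Sequence[int], n_bins: int = 10
) -> float:
    """Weighted mean gap between predicted probability and observed rate per bin."""
    if len(probs) != len(labels) or not probs:
        raise ValueError("probs and labels must be non-empty and the same length")
    bins = [[] for _ in range(n_bins)]
    for p, y in zip(probs, labels):
        idx = min(int(p * n_bins), n_bins - 1)  # p == 1.0 falls in the last bin
        bins[idx].append((p, y))
    total = len(probs)
    ece = 0.0
    for members in bins:
        if not members:
            continue
        mean_prob = sum(p for p, _ in members) / len(members)
        observed_rate = sum(y for _, y in members) / len(members)
        ece += (len(members) / total) * abs(mean_prob - observed_rate)
    return ece


@dataclass
class MonitorDecision:
    ece: float
    action: str  # "continue", "review", or "rollback"


def review_window(
    probs: Sequence[float],
    labels: Sequence[int],
    review_at: float = 0.05,    # hypothetical threshold, not clinically validated
    rollback_at: float = 0.15,  # hypothetical threshold, not clinically validated
) -> MonitorDecision:
    """Map the calibration error of one monitoring window to an escalation action."""
    ece = expected_calibration_error(probs, labels)
    if ece >= rollback_at:
        action = "rollback"   # revert to the previous model or to manual checks
    elif ece >= review_at:
        action = "review"     # escalate to the governance group for inspection
    else:
        action = "continue"
    return MonitorDecision(ece=ece, action=action)
```

In practice, any such thresholds would need local validation, and a calibration check of this kind would complement rather than replace incident reporting and pharmacist oversight.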

Human–AI teaming also warrants dedicated study, including how best to present explanations and uncertainty to support appropriate reliance, how to integrate incident reports involving AI into organizational learning, and how to share accountability between clinicians and AI-supported systems. Finally, governance and regulatory frameworks must evolve to clarify responsibilities for AI-assisted decisions, specify expectations for documentation and reporting, and ensure that safety improvements do not come at the expense of transparency or equity.

Collectively, these dimensions provide a practical roadmap for translating promising AI capabilities into measurable safety gains for dispensing. While the current evidence base is growing, it remains heterogeneous; our contribution is a structured synthesis and a forward-looking agenda that aligns technical innovation with operational realities and governance requirements. Future progress will depend on multimodal, representative datasets; external validation across diverse settings and formularies; pragmatic and hybrid effectiveness–implementation evaluations; and robust oversight mechanisms that preserve professional judgment and patient trust.

If health systems pair state-of-the-art AI methods with sociotechnical integration and continuous learning, AI can help deliver safer, more reliable, and more equitable medication dispensing without compromising the clinician–patient relationship.

Acknowledgement

This research was funded by Khalifa University of Science and Technology through the Research & Innovation Grant Program under Project ID: KU-INT-RIG-2025-8471000044.

Disclosure

The authors report no conflicts of interest in this work.

References

1. Ferner RE, Aronson JK. Clarification of terminology in medication errors: definitions and classification. Drug Saf. 2006;29(11):1011–1022. doi:10.2165/00002018-200629110-00001

2. Elliott RA, Camacho E, Jankovic D, Sculpher MJ, Faria R. Economic analysis of the prevalence and clinical and economic burden of medication error in England. BMJ Qual Saf. 2020;30(2):96–105. doi:10.1136/bmjqs-2019-010206

3. World Health Organization. Medication without harm: WHO global patient safety challenge. Geneva: World Health Organization. 2017. Available from: https://www.who.int/publications/i/item/WHO-HIS-SDS-2017.6.

4. Donaldson LJ, Kelley ET, Dhingra-Kumar N, Kieny MP, Sheikh A. Medication without harm: WHO’s third global patient safety challenge. Lancet. 2017;389(10080):1680–1681. doi:10.1016/S0140-6736(17)31047-4

5. Cheung KC, Bouvy ML, De Smet PAGM. Medication errors: the importance of safe dispensing. Br J Clin Pharmacol. 2009;67(6):676–680. doi:10.1111/j.1365-2125.2009.03428.x

6. Gao Y, Guo Y, Zheng M, et al. A refined management system focusing on medication dispensing errors: a 14-year retrospective study of a hospital outpatient pharmacy. Saudi Pharm J. 2023;31(12):101845. doi:10.1016/j.jsps.2023.101845

7. Aldhwaihi K, Schifano F, Pezzolesi C, Umaru N. A systematic review of the nature of dispensing errors in hospital pharmacies. Integr Pharm Res Pract. 2016;5:1–10. doi:10.2147/IPRP.S95733

8. Ibrahim OM, Ibrahim RM, Al Meslamani AZ, Al Mazrouei N. Dispensing errors in community pharmacies in the United Arab Emirates: investigating incidence, types, severity, and causes. Pharm Pract. 2020;18(4):1.

9. Molstad S, Rehnberg C, Sawe H. Introducing unit-dose dispensing in a university hospital: effects on the distribution of medication errors. Risk Manag Healthc Policy. 2014;7:49–56. doi:10.2147/RMHP.S49754

10. Cooke CE, Isetts BJ, Sullivan TE, Fustgaard M, Belletti DA. Potential value of electronic prescribing in health economic and outcomes research. Patient Relat Outcome Meas. 2010;1:163–178. doi:10.2147/PROM.S13033

11. Ye J. Patient safety of perioperative medication through the lens of digital health and artificial intelligence. JMIR Perioper Med. 2023;6:e34453. doi:10.2196/34453

12. Baurasien BK, Alshehri F, Almalki A, et al. Medical errors and patient safety: strategies for reducing errors using artificial intelligence. Int J Health Sci. 2023;7(S1):3471–3487. doi:10.53730/ijhs.v7nS1.15143

13. Fracica PJ, Fracica EA. Patient Safety. In: Medical Quality Management: Theory and Practice. Springer; 2021:53–90.

14. Heikkinen I. Barcode medication administration and patient safety: a narrative literature review. 2022.

15. Cardozo T. Pharmacy manager system implementation strategies to mitigate the cost of prescription errors [PhD dissertation]. Walden University; 2023.

16. Tantray J, Patel A, Wani SN, Kosey S, Prajapati BG. Prescription precision: a comprehensive review of intelligent prescription systems. Curr Pharm Des. 2024;30(34):2671–2684. doi:10.2174/0113816128321623240719104337

17. Ye J. Patient safety of perioperative medication through the lens of digital health and artificial intelligence. JMIR Perioper Med. 2023;6:e34453. doi:10.2196/34453

18. Nunavath RS, Nagappan K. Future of pharmaceutical industry: role of artificial intelligence, automation and robotics. J Pharmacol Pharmacother. 2024;15(2):1.

19. Stasevych M, Zvarych V. Innovative robotic technologies and artificial intelligence in pharmacy and medicine: paving the way for the future of health care—A review. Big Data Cogn Comput. 2023;7(3):147. doi:10.3390/bdcc7030147

20. Khan O, Parvez M, Kumari P, Parvez S, Ahmad S. The future of pharmacy: how AI is revolutionizing the industry. Intell Pharm. 2023;1(1):32–40.

21. Belagodu Sridhar S, Karattuthodi MS, Parakkal SA. Role of artificial intelligence in clinical and hospital pharmacy. In: Bhupathyraaj M, Vijayarani KR, Dhanasekaran M, Essa MM, editors. Application of Artificial Intelligence in Neurological Disorders. Nutritional Neurosciences. Springer; 2024. doi:10.1007/978-981-97-2577-9_12

22. Carayon P, Schoofs Hundt A, Karsh BT, et al. Work system design for patient safety: the SEIPS model. Qual Saf Health Care. 2006;15(Suppl 1):i50–i58. doi:10.1136/qshc.2005.015842

23. Clarkson PJ, Bogle D, Dean JD, et al. Engineering better care: a systems approach to health and care design and continuous improvement. Royal Academy of Engineering; 2017. Available from: https://www.eng.cam.ac.uk/uploads/news/files/engineering-better-care-report-web-3mb-20170922.pdf.

24. Fong A, Harriott N, Walters DM, Foley H, Morrissey R, Ratwani RR. Integrating natural language processing expertise with patient safety event review committees to improve the analysis of medication events. Int J Med Inform. 2017;104:120–125. doi:10.1016/j.ijmedinf.2017.05.005

25. Tseng VS, Chen CH, Chen HM, Chang HJ, Yu CT. Analysis and prevention of dispension errors by using data mining techniques. In:

26. Naybour M, Remenyte-Prescott R, Boyd M. Ant colony optimisation of a community pharmacy dispensing process using coloured petri-net simulation and UK pharmacy in-field data. Proc Inst Mech Eng O J Risk Reliab. 2024;238(1):29–43. doi:10.1177/1748006X221135459

27. You YS, Lin YS. A novel two-stage induced deep learning system for classifying similar drugs with diverse packaging. Sensors. 2023;23(16):7275. doi:10.3390/s23167275

28. Zheng Y, Rowell B, Chen Q, et al. Designing human-centered AI to prevent medication dispensing errors: focus group study with pharmacists. JMIR Form Res. 2023;7:e51921. doi:10.2196/51921

29. Chen MC, Wang MS, Chang WJ, Cheng TS. IDispensing: a deep learning-based dispensing medicine recognition system. In:

30. Han Y, Chung SL, Xiao Q, Wang JS, Su SF. Pharmaceutical blister package identification based on induced deep learning. IEEE Access. 2021;9:101344–101356. doi:10.1109/access.2021.3097181

31. Gaudet-Blavignac C, Foufi V, Bjelogrlic M, Lovis C. Use of the systematized nomenclature of medicine clinical terms (SNOMED CT) for processing free text in health care: systematic scoping review. J Med Internet Res. 2021;23(1):e24594. doi:10.2196/24594

32. Shahpori R, Doig C. Systematized nomenclature of medicine–clinical terms direction and its implications on critical care. J Crit Care. 2010;25(2):364.e1–364.e9. doi:10.1016/j.jcrc.2009.08.008

33. Guidotti R, Monreale A, Ruggieri S, Turini F, Giannotti F, Pedreschi D. A survey of methods for explaining black box models. ACM Comput Surv. 2018;51(5):93. doi:10.1145/3236009

34. Amann J, Blasimme A, Vayena E, et al. Explainability for artificial intelligence in healthcare: a multidisciplinary perspective. BMC Med Inform Decis Mak. 2020;20(1):310. doi:10.1186/s12911-020-01332-6

35. Kaushal A, Altman R, Langlotz C. Geographic distribution of US cohorts used to train deep learning algorithms. JAMA. 2020;324(12):1212–1213. doi:10.1001/jama.2020.12067

36. Topol EJ. High-performance medicine: the convergence of human and artificial intelligence. Nat Med. 2019;25(1):44–56. doi:10.1038/s41591-018-0300-7

37. Esmaeilzadeh P. Challenges and strategies for wide-scale artificial intelligence (AI) deployment in healthcare practices: a perspective for healthcare organizations. Artif Intell Med. 2024;151:102861. doi:10.1016/j.artmed.2024.102861

38. Gupta NS, Kumar P. Perspective of artificial intelligence in healthcare data management: a journey towards precision medicine. Comput Biol Med. 2023;162:107051. doi:10.1016/j.compbiomed.2023.107051

39. Adeyemi C, Adegoke BO, Odugbose T. The impact of healthcare information technology on reducing medication errors: a review of recent advances. Int J Front Med Surg Res. 2024;5(2):20–29. doi:10.53294/ijfmsr.2024.5.2.0034

40. Simsekler MCE, Card AJ, Ruggeri K, Ward JR, Clarkson PJ. A comparison of the methods used to support risk identification for patient safety in one UK NHS foundation trust. Clin Risk. 2015;21(2–3):37–46. doi:10.1177/1356262215580224

41. Card AJ, Simsekler MCE, Clark M, Ward JR, Clarkson PJ. Use of the generating options for active risk control (GO-ARC) technique can lead to more robust risk control options. Int J Risk Saf Med. 2014;26(4):199–211. doi:10.3233/JRS-140636

42. Albreiki S, Simsekler MCE, Qazi A, Bouabid A. Assessment of the organizational factors in incident management practices in healthcare: a tree augmented Naive Bayes model. PLoS One. 2024;19(3):e0299485. doi:10.1371/journal.pone.0299485

43. Toffaha KM, Simsekler MCE, Omar MA. Leveraging artificial intelligence and decision support systems in hospital-acquired pressure injuries prediction: a comprehensive review. Artif Intell Med. 2023;141:102560. doi:10.1016/j.artmed.2023.102560

44. Zhang D, Yin C, Zeng J, et al. Combining structured and unstructured data for predictive models: a deep learning approach. BMC Med Inform Decis Mak. 2020;20(1):280. doi:10.1186/s12911-020-01297-6

45. CIEHF. Human factors and ergonomics in healthcare AI. Available from: https://www.ergonomics.org.uk/Public/Resources/Publications/Human-Factors-and-Ergonomics-in-Healthcare-AI.aspx.