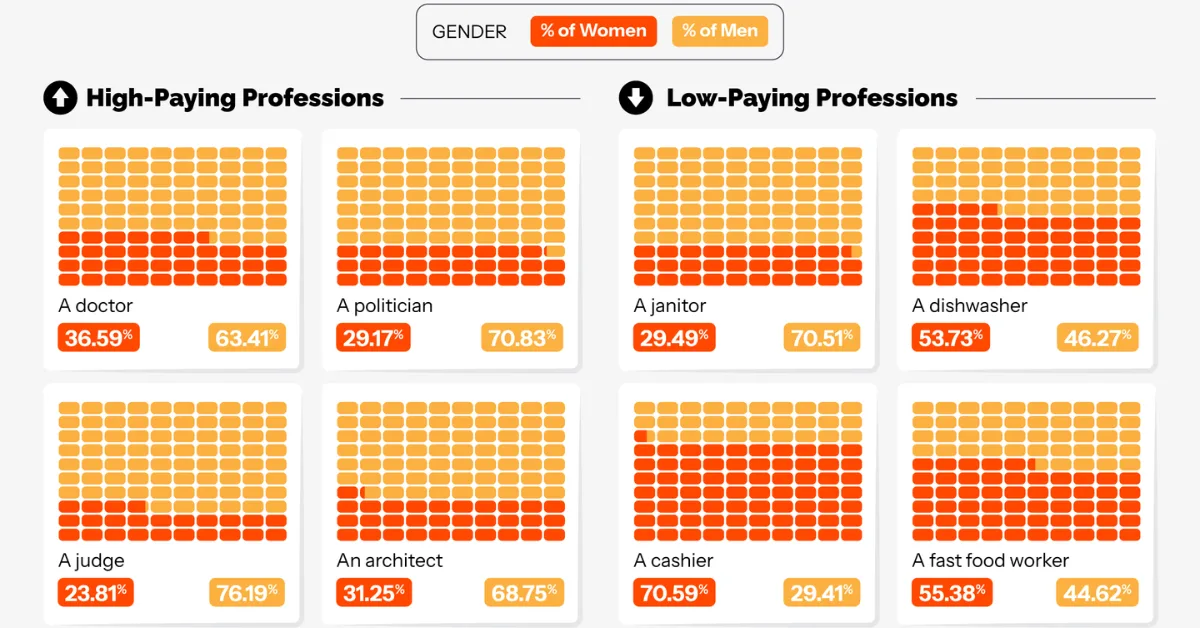

Male Doctors, Female Cashiers: AI’s Job Bias This was originally posted on our Voronoi app. Download the app for free on iOS or Android and discover incredible data-driven charts from a variety of trusted sources. Key Takeaways AI-generated videos depict roughly 70%+ of high-paying roles, such as CEOs, software engineers, and financial analysts, as male.

Artificial Neural Networks: The Unsung Hero Behind the Current AI Technologies

📝 Summary

Artificial neural networks, a family of ideas developed over 80 years, are the unsung hero behind current AI technologies such as ChatGPT, image generators, and voice assistants. These networks learn patterns from data and have been the core engine behind many AI systems, despite being largely invisible to the public.

When people say “AI” today, many of them are thinking about ChatGPT, image generators, voice assistants, translation tools, coding copilots, or systems that can summarise documents in seconds. These tools feel new, powerful and sometimes mysterious. Yet the main engine inside many of them is not a sudden invention from 2022. It is a family of ideas that has been growing, failing, returning and improving for more than 80 years: the artificial neural network.

This is why neural networks deserve to be called the unsung heroes of the current artificial intelligence (AI) revolution. The public sees the chatbot. The public sees the generated image. The public sees the smart search result. But behind the screen, the modern AI system usually depends on layers of artificial “neurons” learning patterns from data.

Before ChatGPT, “AI” meant many things

It is essential to remember that AI did not always refer to neural-network-based systems. Before the ChatGPT phenomenon at the end of 2022, AI was a broad umbrella. It included expert systems that used human-written rules, symbolic programming in languages such as LISP, fuzzy logic, image processing and recognition, search and planning, robotics, and optimisation techniques inspired by nature, such as genetic algorithms, ant colony optimisation, particle swarm optimisation and many others.

All of these belonged to the larger story of AI: the attempt to make machines perform tasks that normally require human intelligence. But after ChatGPT became a global sensation, the popular meaning of AI changed. For many people, “AI” now means a system that can learn from huge amounts of data, understand language, generate text, create images, write code and respond naturally. In practice, that usually points to deep learning, and deep learning is built on deep neural networks.

So, to understand today’s AI, we need to understand neural networks.

What is a neural network, in plain language?

A neural network is a computer model inspired loosely by the way brains process information. It is not a digital brain, and it does not copy biology perfectly. Instead, it borrows a simple idea: many small processing units can be connected, and the strength of those connections can be adjusted through learning.

Imagine a student learning to recognise cats. At first, the student may guess based on simple features: ears, eyes, whiskers, and tail. After seeing many examples, the student becomes better at noticing patterns. A neural network does something similar, but mathematically. It receives inputs, passes signals through connected units called artificial neurons, and produces an output such as “cat”, “not cat”, “next word”, “spam”, “safe transaction” or “tumour likely present”.

The important part is learning. A neural network is not usually programmed with every rule by hand. Instead, it is trained using examples. During training, the system compares its prediction with the correct answer and adjusts its internal connection strengths, called weights. Over time, these weights become the memory of what the network has learned.

1943: the first mathematical neuron

The modern story often begins in 1943, when Warren McCulloch and Walter Pitts published their work titled “A Logical Calculus of the Ideas Immanent in Nervous Activity.” Their work proposed a simplified mathematical model of a neuron. In their model, a neuron could receive inputs, apply a threshold and produce an output. This was a very early attempt to describe thinking-like activity using logic and computation.

The McCulloch-Pitts neuron did not learn in the modern sense. It did not automatically improve from the data. But it introduced a powerful idea: perhaps networks of simple artificial units could perform meaningful computation. That idea became one of the seeds of connectionism, the view that intelligence may emerge from many connected simple units rather than from rules written one by one.

The perceptron and the optimism of the 1950s and 1960s

The next important chapter came from Frank Rosenblatt. In the late 1950s, Rosenblatt introduced the perceptron, one of the first artificial neural networks that could learn from examples. The perceptron could adjust its weights to classify inputs. It was exciting because it seemed to show that a machine could learn patterns rather than only follow fixed instructions.

In 1958, reports about the perceptron created public excitement. The idea that a machine might learn to recognise patterns felt revolutionary. Rosenblatt’s work was an important step because it moved artificial neurons from static logical models toward learning systems.

However, the early perceptron had a serious limitation. A simple single-layer perceptron could only solve problems that were linearly separable. In everyday language, it could draw only a simple boundary between categories. It could not solve certain problems where the pattern required multiple steps of internal reasoning.

Why neural-network research slowed down

The perceptron problem

In 1969, Marvin Minsky and Seymour Papert published the book Perceptrons. They showed rigorously that single-layer perceptrons had important limitations, including their inability to solve the famous XOR problem. The XOR example may sound technical, but the lesson was simple: if a neural network has no useful hidden layers, it cannot learn many patterns that even small multi-step systems should handle.

This critique did not mean neural networks were useless. It meant that the simple versions of that era were not enough. Unfortunately, the field did not yet have a widely trusted and practical method for training multi-layer networks. Computers were also slow, datasets were small, and many researchers were more confident in symbolic AI systems built from explicit rules.

The wider AI Winter

The slowdown also happened inside a wider crisis of confidence in AI. In the United Kingdom, the 1973 Lighthill Report argued that AI had not delivered the broad impact that earlier optimism promised, and it criticised the difficulty of scaling AI methods beyond small demonstration problems.

In the United States, changing research policy and stricter expectations for defence-funded projects reduced support for open-ended AI research; the National Research Council later described how this shift affected government support for computing and AI research.

Historians now describe this period as part of the first AI Winter: a time when public promises, limited computing power, narrow datasets and difficult real-world problems created a gap between AI ambition and practical delivery.

Why the gap lasted

Neural-network research was caught in that cold climate. It was not completely dead, but it became unfashionable compared with symbolic AI and other approaches. The gap from the late 1960s to the early 1980s was therefore not caused by one book alone; it was caused by a combination of mathematical limitations, weak training methods, limited hardware, limited data and reduced confidence in AI funding.

The late 1980s revival: hidden layers learned to learn

The field warms up again

Neural networks returned strongly in the 1980s. Several developments helped. John Hopfield’s work connected neural networks with ideas from physics. Geoffrey Hinton and Terrence Sejnowski explored Boltzmann machines and distributed representations. Most famously, David Rumelhart, Geoffrey Hinton and Ronald Williams popularised backpropagation in their 1986 work on learning internal representations by back-propagating errors.

Why backpropagation mattered

Backpropagation was the practical novelty that made the revival powerful. It provided an efficient way to calculate how much each internal weight contributed to an error, then adjust the weights layer by layer. This addressed the “credit assignment” problem: when the output is wrong, which earlier connections should be blamed, and by how much?

This was different from the early perceptron era. Neural networks were no longer limited to a simple input-output layer. Hidden layers could now learn useful internal representations. Instead of humans manually designing every feature, the network could learn intermediate patterns by itself.

Why the revival still had limits

Still, neural networks of the late 1980s and 1990s could not go as far as today’s systems. The reasons were practical. Computers were not powerful enough, especially for large matrix calculations. Graphics processing units (GPUs) were not yet widely used for AI training. Datasets were much smaller. Algorithms also struggled with problems such as vanishing gradients, where learning signals become weak as they move backwards through many layers. Neural networks were useful, but they were not yet ready to dominate the AI world.

From neural networks to deep learning

More than just many layers

Deep learning is machine learning built around deep neural networks, but it is not only about stacking many hidden layers. Depth matters because extra layers allow a system to build representations step by step. However, modern deep learning also depends on several other ingredients: large datasets, powerful hardware, better optimisation methods, improved activation functions, regularisation techniques, and software frameworks that make large-scale training practical.

Classic versus deep neural networks

A classic neural network was often shallow: one input layer, perhaps one hidden layer, and one output layer. It could learn useful patterns, but it often depended heavily on features prepared by humans. A modern deep neural network usually has many layers, many more adjustable parameters, and the ability to learn features automatically from raw or lightly processed data. In other words, the system does not merely classify using hand-designed clues; it learns internal representations that become useful for the task.

Patterns within patterns

For example, in image recognition, early layers may detect edges, later layers may detect shapes, and deeper layers may detect objects. In language, early layers may capture word patterns, deeper layers may capture grammar, meaning, context and relationships between ideas. The exact internal process is complex, but the principle is intuitive: each layer transforms information into a more useful form for the next layer.

The motivation for deep neural networks was clear. Many real-world problems are not simple. Human language, vision, speech, medical images and scientific data contain patterns within patterns. Shallow models often cannot capture this rich structure. Deep networks offered a way to learn complex representations directly from data.

The 2012 acceleration

Deep learning began to accelerate when several conditions finally came together: large digital datasets, faster hardware, better training methods, improved activation functions, regularisation methods, and software frameworks that made large-scale training practical.

The next decade was therefore not just a period of bigger neural networks. It was a period when a new generation of builders turned these ingredients into systems that started to work in the real world.

The 2010s: the decade neural networks became useful to everyone

A turning point, not a starting line

The 2010s did not begin neural-network research. By then, the field already had artificial neurons, perceptrons, hidden layers, backpropagation and decades of belief from pioneers such as Geoffrey Hinton, Yoshua Bengio and Yann LeCun. What changed was that younger researchers and engineers now had the missing ingredients: large datasets, GPUs, better training methods and a growing culture of open code and open courses. They did not merely prove that neural networks could work in theory. They made them useful enough for other people to build on.

The ImageNet moment that changed the mood

The famous turning point came in 2012. Alex Krizhevsky, Ilya Sutskever and Geoffrey Hinton used a deep convolutional neural network called AlexNet to achieve a major breakthrough in the ImageNet image-recognition competition. The result mattered because ImageNet was not a tiny laboratory demonstration. It was a large, difficult benchmark with many categories of real images. Suddenly, neural networks were not just an old idea returning from history; they were outperforming older methods on a task the wider AI community cared about.

Builders removed the practical barriers

After ImageNet, the question changed from “Can neural networks work?” to “Where else can they work?” Sutskever, Oriol Vinyals and Quoc Le helped show that neural networks could map one sequence into another, a key step for machine translation and other language tasks.

Later, GPT-1 and GPT-2 showed how large neural language models trained on broad text data could become more general-purpose. Andrej Karpathy, working with Fei-Fei Li, helped connect vision and language by using neural networks to generate natural-language descriptions of images, while CS231n helped many learners enter the field through practical deep-learning material.

Ian Goodfellow and colleagues showed that neural networks could generate realistic data through GANs. Diederik Kingma and Jimmy Ba introduced Adam, an optimisation method that made training deep models easier in practice. Kaiming He and colleagues introduced residual networks, which helped very deep neural networks train successfully.

The knowledge spread beyond a few laboratories

The 2010s were also important because deep learning became teachable and buildable. Karpathy and Fei-Fei Li’s CS231n course at Stanford gave many students and engineers a practical route into convolutional neural networks. Open-source systems such as TensorFlow made deep-learning tools available beyond a small circle of specialists. This mattered socially as much as technically. A good idea becomes powerful only when many people can learn it, test it, improve it and apply it to problems outside the original laboratory.

Suddenly, neural networks were everywhere

By the end of the decade, neural networks had moved from research papers into ordinary digital life. They helped photo apps recognise faces and objects, translation tools handle language more naturally, voice assistants understand speech more accurately, recommendation systems suggest what to watch or buy, and image systems create or edit visual content. Most people did not know these improvements came from neural networks.

They simply noticed that their tools worked better. That quiet usefulness was the real sign that the field had changed.

Neural networks enter language

Beyond n-grams

Language modelling is the task of predicting language: what word, phrase or token is likely to come next, or how a piece of text should be represented. Before neural language models, many systems used statistical methods such as n-grams. These models counted short word sequences and estimated probabilities. They were useful, but they struggled with meaning, long-range context and words or phrases not seen before.

Distributed word representations

A major step came from Yoshua Bengio and colleagues, who proposed a neural probabilistic language model in the early 2000s. Their idea was to learn distributed representations of words. Instead of treating every word as a separate symbol with no relationship to others, the model could learn that words used in similar contexts should have related representations. This helped language models generalise better.

Recurrent models and the bottleneck

Later, word embeddings such as Word2Vec made this idea famous: words could be represented as vectors, where relationships between words became mathematical relationships. Recurrent neural networks (RNNs) and long short-term memory networks (LSTMs) were then used for sequence tasks such as translation, speech and text generation. These models were important because language is sequential: the meaning of a word often depends on what came before it.

However, recurrent models had a bottleneck. They processed sequences step by step, which made training slower and made long-distance relationships difficult. A sentence can refer to something many words earlier. A document can connect ideas across paragraphs. Language needed a better way to look across contexts.

The transformer changed the speed and scale of language AI

Self-attention replaces step-by-step reading

In 2017, the paper “Attention Is All You Need” introduced the transformer architecture. The key idea was self-attention: the model can learn which parts of a sequence should pay attention to other parts. Instead of reading strictly one word at a time like older recurrent models, transformers can process many relationships in parallel.

This mattered enormously. Transformers were more efficient to train on modern hardware and better at capturing long-range dependencies. They became the foundation of modern large language models.

The pre-ChatGPT model wave

The early transformer era produced models such as GPT-1 in 2018, BERT in 2018, GPT-2 in 2019 and GPT-3 in 2020. BERT became influential for language understanding tasks such as question answering and text classification. GPT-style models became influential for text generation because they learned to predict the next token at a massive scale.

Before ChatGPT appeared, the world already had increasingly powerful language models, including GPT-3, LaMDA, PaLM, OPT, BLOOM and others. But many of them were mostly known among researchers, developers and technology companies.

The public moment

ChatGPT changed public awareness. It did not merely place a neural-network language model inside a chat window; it combined a large language model with additional training that made it better at following instructions, responding conversationally and aligning more closely with human preferences. Suddenly, the technology was not just in papers, APIs or demos. It was on everyone’s screen.

Why LLMs are different from older language models

More than a bigger dictionary

A large language model, or LLM, is not merely a bigger dictionary. It is a deep neural network trained on enormous text datasets to learn patterns of language. Compared with older language models, modern LLMs use much deeper architectures, far more parameters, much larger datasets and transformer-based attention mechanisms.

Representations, not just word counts

The difference is not only size, but representation. A traditional language model may estimate that one word often follows another. A neural language model learns internal representations of words, phrases, styles, context and statistical associations that can support fact-like recall and reasoning-like behaviour.

A transformer-based LLM uses layers of attention and feedforward neural networks to transform tokens into rich contextual representations. This is why the same word can be interpreted differently in different sentences.

Powerful, but not human understanding

This does not mean LLMs truly understand the world the way humans do. They can still make mistakes, invent facts, reflect bias and fail at reasoning. But their practical power comes from the same core idea that began decades ago: many connected units, trained on examples, adjusting weights until patterns are captured.

The invisible engine inside everyday AI

You see the tool, not the network

When someone uses a chatbot, searches for photos by typing “beach sunset,” asks a phone to translate a sign, accepts an autocorrect suggestion, or receives a useful recommendation, they usually do not see a neural network. They see an app that feels convenient. Behind many of those experiences, however, are layers of artificial neurons turning data into patterns, and patterns into predictions.

Why the hidden part matters

This hiddenness is useful, but it can also make AI feel like magic. Knowing that a neural network is inside does not explain every decision the system makes, but it gives us a better starting point. These tools learn from examples. They depend on data. They can be powerful, but they can also make mistakes, inherit bias and behave differently outside the patterns they were trained on.

The artificial neural network began as a simple mathematical idea. It survived doubt, slow hardware, limited data and changing research fashions. Today, it quietly powers AI tools that millions of people use without thinking about the machinery inside. That is why it remains the unsung hero of the AI revolution: not because it is loud, but because it is everywhere.