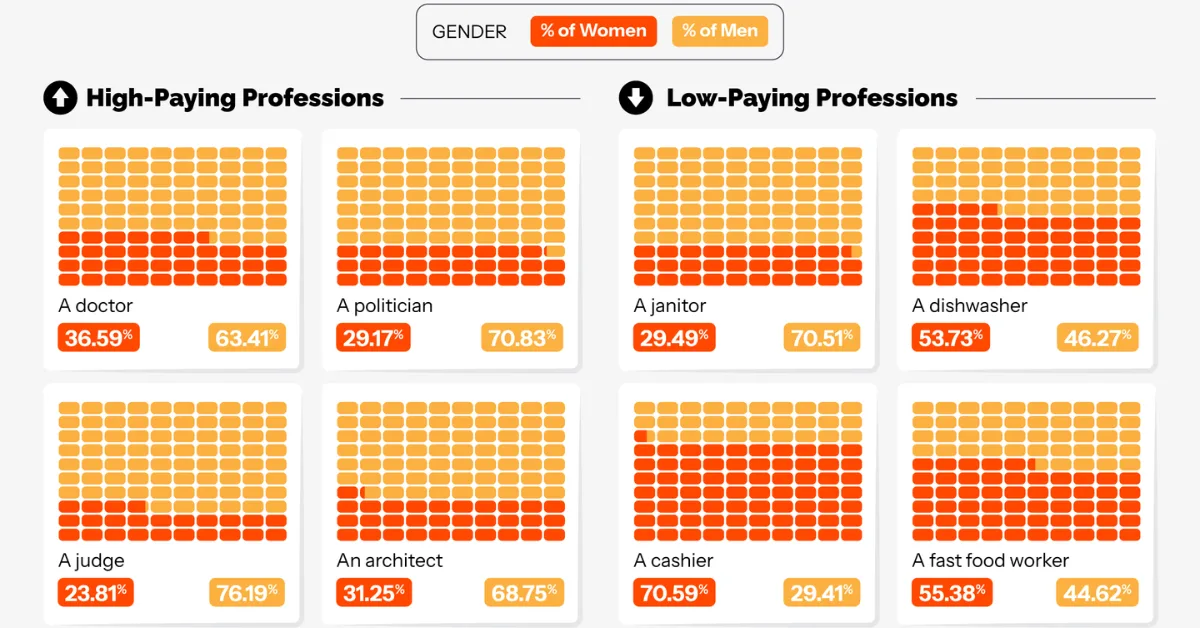

Male Doctors, Female Cashiers: AI’s Job Bias This was originally posted on our Voronoi app. Download the app for free on iOS or Android and discover incredible data-driven charts from a variety of trusted sources. Key Takeaways AI-generated videos depict roughly 70%+ of high-paying roles, such as CEOs, software engineers, and financial analysts, as male.

A human-in-the-loop explanation framework for morphologically transparent AI predictions from whole-slide images – npj Digital Medicine

- Article

- Open access

Peiliang Lou1, Yitan Zhu2, Nicholas Chia2, Roopa Kumari3, William Yang3, Yan Wang4, Brenna C. Novotny1, Stacey J. Winham1, Ruifeng Guo5, Ellen L. Goode1, Yajue Huang3, Wenchao Han6, Tianshu Feng7, … & Chen Wang1

npj Digital Medicine (2026)

We are providing an unedited version of this manuscript to give early access to its findings. Before final publication, the manuscript will undergo further editing. Please note there may be errors present which affect the content, and all legal disclaimers apply.

Abstract

Deep learning models enable the prediction of clinical endpoints from whole-slide images (WSIs), but many such models function as “black boxes”, lacking transparency about whether and which histomorphological patterns drive their predictions, hindering interpretability and clinical adoption. Here we propose a human-in-the-loop explanation framework, MorphoXAI, which provides both local and global interpretability for deep learning models by incorporating human-expert interpretations. At the global level, it reveals the histomorphological patterns on which the model consistently relies to distinguish between classes of WSIs, as well as the patterns associated with confusion between classes. At the local level, it indicates which of these patterns are used in the prediction of an individual WSI and which regions within the slide correspond to such patterns. We validated our method across multiple deep learning–based WSI analysis tasks spanning different tissue types. The results show that our framework generates explanations that accurately reflect the histomorphology underlying the model’s predictions at both global and local levels. For interpretability and clinical utility in diagnostic contexts, human evaluation results showed that our explanations were easy to interpret, rich in diagnostic features, and directly helpful for diagnostic decision-making, thereby enhancing pathologist-AI collaboration. Our work highlights that unifying global and local explanations and grounding them in expert-interpreted morphology enhances the interpretability and verifiability of deep learning models, thereby facilitating the transparent deployment of such models in clinical practice.

Acknowledgements

This work has been supported by NCI R01 CA248288, an Ovarian SPORE [NCI P50 CA136393] developmental research grant, and by the generous support of Schmidt Sciences and the Susan Morrow Legacy Foundation. The funders had no role in the study design, data analysis, manuscript preparation, or the decision to submit the work for publication.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Lou, P., Zhu, Y., Chia, N. et al. A human-in-the-loop explanation framework for morphologically transparent AI predictions from whole-slide images. npj Digit. Med. (2026). https://doi.org/10.1038/s41746-026-02741-z

DOI: https://doi.org/10.1038/s41746-026-02741-z