
AI Is Breaking Education. Rebecca Winthrop Has the Blueprint to Fix It.

Daniel Barcay: Hey, everyone. I’m Daniel Barcay. Welcome to Your Undivided Attention. So something I’ve been hearing over and over again lately is that we have to prepare our children for the AI future, but what does that even mean? Because yes, there’s a vision of the world where AI radically improves education. And I have to admit, there’s a part of me that’s really optimistic about this vision. I want to believe in that infinitely patient tutor who can sit, and watch every mistake that you make, and learn how to teach you in exactly the way that you need. I really want to believe in the promise of that. But the history of education technology tells us that these kinds of simple, optimistic stories are often naive.

Ask any teacher or student whether they truly feel unleashed by technology to do their best work. And because AI has the potential to really transform education, we need to ask big and critical questions, like where should we embrace these powerful tools? What should we keep the same about the classroom? What’s the purpose of education in an AI age? And how do we prepare our students, our children, for a future that’s still so radically uncertain? Well, my guest today is Rebecca Winthrop, and she actually has some of these answers. She leads the Center for Universal Education at the Brookings Institution. And they just released a report called A New Direction for Students in an AI World.

They talked to educators, and students, and parents, and policymakers, and technologists all around the world about what the role of AI in education should be. And today, Rebecca’s going to walk us through what she’s learned, what’s working, what’s not, and most importantly, what are the concrete steps that parents, teachers, and administrators can and should take right now? The classroom is this crucible for how we integrate AI with society, and I’m really glad that there are people like Rebecca doing the deep work to make sure we get this right.

Daniel Barcay: Rebecca, welcome to Your Undivided Attention.

Rebecca Winthrop: Lovely to be back, Daniel.

Daniel Barcay: So when we had you on the podcast about a year ago, you were just getting started on this massive premortem of AI in education. And now, it’s over and you’re out with this report that’s like 200 pages long. Let’s start there, tell me, what does it mean to do an AI premortem?

Rebecca Winthrop: So we wanted to take lessons from the social media experiment with our children, because at the time that social media rolled out, educators, parents, social workers, mentors, people who worked with kids were not at the table. And we knew, when social media was being designed, that certain things are not good for kids and their development, because we do know a lot about children’s development. For example, we knew that social comparisons, particularly in adolescence, can be harmful. That is not a new revelation. And so fast-forward, what could we do today, now that AI is being rolled out, to get ahead of the game? And so we were really looking at, what are the possible risks and possible benefits? And how would one mitigate the risks and harness the benefits of generative AI and students’ learning and development? And we were really trying to ask the question, are we on the right track, currently today, and the direction we’re heading, or do we need to shift course?

Daniel Barcay: Okay, so I want to go into what you found in the report, but before we do that, I think we should lay out for people a little bit, what does AI look like today in the classroom? How is it being used already?

Rebecca Winthrop: Kids are accessing AI everywhere, in and out of school. So they’re accessing AI through social media, through AI companions, AI pops up when they do a Google search. They’re accessing AI through some ed-tech products. Now, teachers are using AI a lot to help prepare lessons, to find interesting activities, to grade students’ work and do different types of assessment, but it’s really mixed as to how kids are using AI in the classroom. What we do know is that kids are using AI outside of the classroom a lot.

Daniel Barcay: We had Ethan Mollick on the podcast a little while ago talking about AI and work, and he was calling it the secret cyborg phenomenon. Everyone’s using it, but using it kind of privately, and there’s no standards. They don’t even want to say that they’re using it. Is that what’s happening in the classroom? Everyone’s using it, but is sort of rolling their own, going their own direction?

Rebecca Winthrop: Yeah. And here I’ll make a distinction between what teachers are doing while kids are sitting in front of them during the school day versus what kids are doing on their homework outside of class and bringing to the classroom. So we know kids are using it in their schoolwork, we know lots of kids are using it in their daily life for communication, for entertainment, for education, and that is all blurred together. Those clear boundaries between ed-tech, entertainment, communication are all mixed together now. And we know that kids are often using AI to do homework, including writing essays, running it through an AI humanizer, and then turning it in and not getting caught by their teacher. We had a number of kids in our interviews tell us that. So that’s what’s happening today.

And it’s true that kids don’t want to say they’re using it because a lot of times they’re not allowed to. But I will say, even teachers are not being totally transparent with their students about when they are using it. Daniel, one of the things that we found that was actually the most worrisome for me of all the risks that we uncovered, is a degrading of trust in the student-teacher relationship. We find that kids are becoming quite critical of teachers for using AI, even though it could help their learning. Again, with the sort of secret use, not being transparent, we cannot learn if there is not a trusting relationship to situate ourselves in.

We found that teachers are not really trusting their students, 50% of teachers say they don’t trust that what their students give them is actually their work. Incredibly difficult for a teacher to be able to teach and help students if they don’t know what they got wrong and what they got right. But also, it goes the other way around, 50% of students say they don’t trust their teachers. They think that their teachers are secretly using AI to do their lesson, grading their assignments, and it’s not really them putting in effort. And even when teachers use AI in a way that’s trying to be helpful to students, for example, giving them the opportunity to get feedback on an essay before turning it in, which a teacher wouldn’t have time to do, students interpret that as a lack of care. We’re also finding that parents are doing weird stuff. They’re running their kid’s assignments through ChatGPT, and if it gives a different grade, they’re showing up to the teacher and saying, “Hey, you misgraded my kid’s work.”

Daniel Barcay: Oh, you should have given… Yeah.

Rebecca Winthrop: I talked to a, this was at the college level, university professor who said, “I’ve never had this experience in my life. A student came to my office, was worried about her grade.” That part’s normal. “And proceeded to tell me that I was wrong and ChatGPT was right, she got her whole answers off of ChatGPT, so it had to be right.” So students are also trusting the authority of the chatbot over their human teacher, and we’re hearing that a lot, so this is very problematic. If you do not have trusting relationships in the teaching and learning space, you really can’t build much good quality education.

Daniel Barcay: I mean, I think that’s so important. The students not only feel like their teachers are losing trust in them, but feel like they’re being potentially accused of something that they have no possible way of defending.

Rebecca Winthrop: And there are plenty of false plagiarism accusations, because frankly, the software for catching AI cheating is not that super great, and it overaccuses neurodivergent kids, kids with learning disabilities, and multilingual kids, non-English-speaking kids, of using AI when they don’t, so it’s rife with problems.

Daniel Barcay: I think what you’re saying that’s so important is, regardless of the causes, trust in the classroom is such a precious commodity. I think what causes everyone to open up, to actually learn, to actually try-

Rebecca Winthrop: To listen, to take feedback, to engage, to pay attention, to care. Trust is something you don’t miss until it’s gone.


Daniel Barcay: So moving to the conclusion of your report, what is the track that we’re on and how should we change that?

Rebecca Winthrop: The track that we’re on is not a good one. What we found is that currently, with AI implementation for students in education, the risks are overshadowing the benefits. And it’s not that there aren’t benefits, there are benefits for very narrow AI use, where teachers use it themselves to make better lessons, or kids have AI embedded in perhaps a technology that helps dyslexic kids learn, or educators can assess a wider range of competencies more frequently that helps kids learn. So a very narrow strategic use, with vetted content, and integrated into good teaching and learning approaches can be good. The issue is that the risks are of a very different nature than the benefits. The risks are undermining kids’ ability to learn independently at all, which they need to even take advantage of the benefits.

And the risks are often related to kids’ open-ended wide AI use, which is a term you guys use at the Center for Humane Technology, sort of unscaffolded conversations with chatbots or AI companions for long periods of time. They’re sycophantic, so they socialize young people in a learning context to think they’re great and everything they do is great. So when you show up into a classroom and then you do poor quality work, it’s a real shock to kids. And we’re worried about kids losing that emotional muscle to take critical feedback, which they need to learn and grow. It’s also not safe. There are terrible cases on the margins, but this is really not good, they’re so extreme. The case of Adam Raine, who started using ChatGPT for homework help, and then got basically coached into committing suicide. So that alone is a problem, but all the other reasons make up a risk for kids for unfettered access to AI frontier model chatbots.

Daniel Barcay: In what ways does it interfere with kids’ ability to learn, is it just-

Rebecca Winthrop: Yep. So we really found several big ways. The first one is undermining kids’ cognitive development. So this is where kids are using AI not to help them think critically or help them be more creative, not as a cognitive partner, but as a cognitive surrogate. So instead of them going through the thinking process and doing it themselves, AI is doing it for them.

Daniel Barcay: Right, like cognitive replacement. You don’t know how to do the thinking.

Rebecca Winthrop: Yeah. I mean, we use the term cognitive offloading because that is what people use in the literature and in the field. And in fact, I actually think for kids, cognitive offloading isn’t even the right term. It’s actually cognitive stunting, because kids aren’t even developing the critical thinking and learning skills to offload in the first place. So when you assign an essay to a child, a student, they have to think through, what is the data? What is the evidence? Ooh, how does it stack up? Is there a side of the argument that data sits on that isn’t? How do I make a persuasive argument that uses this data and have a position? Those are hugely difficult skills to develop, and they come through practice. And if you stick in a couple sentences into a chatbot and have it write the essay for you, kids aren’t just merely skipping a couple steps in their homework and being more efficient, they are missing the opportunity to develop their own personal independent thinking skills.

Daniel Barcay: Well, and that brings up a whole other conversation, because it’s not just that the tool isn’t doing the right thing, it’s that we put kids into this weird game theory. I hear from kids all the time and college students, high school students who say, “If I don’t use AI to write my essay for me, then I’m just going to lose out to the kid next to me who will.” It almost feels like Lance Armstrong and bicycle doping for me.

Rebecca Winthrop: Totally. Totally. One of the students I talked to in this journey was at an Ivy League institution, I will not say where. She was a freshman, and she said, “I’m getting a C.” It was a particularly difficult class for her. “I’m getting a C, and I’ll take this C proudly because I’m learning this stuff, I’m doing the work, and all my other peers are using AI and getting A’s.” Then she paused and she said, “But I’m not sure how much longer I can do that because I do want to go to grad school and I’m going to need good grades.” And she’s a really committed, motivated learner. She was there to learn, not just breeze through and get the credential. It’s causing all sorts of problems student to student.

Daniel Barcay: Yeah, so you’ve talked to hundreds of students as part of this. Tell me other stories that stick out to you.

Rebecca Winthrop: Students are really aware of the risks around cognitive stunting. Let’s just call it cognitive stunting for ourselves here on this podcast. They don’t use those words, but it was the number one thing they were most concerned about, was making them dumber.

Daniel Barcay: So this isn’t just adults looking at kids saying-

Rebecca Winthrop: They feel this. And in fact, this has been repeated. A recent survey just came out by Comic Relief, a UK organization around the globe, and it was of young adults. And the number one thing they worry about isn’t the job market and not getting a job because of AI, it’s stopping being able to think well. So kids often say things like, “I’ll use it when I already know the material. I got this.” So I’m just like, “Ugh, busy work, I’m just going to use it.” That’s your motivated student. Or students saying things like, “I’ll use it, but now I’m getting a little worried because now I can’t start any homework on my own.” And so the ability to initiate without it, kids are really saying they’re struggling with that.

Daniel Barcay: Okay, so I imagine there are a few listeners hearing this that sort of feel like… Doesn’t this just sound like math teachers in the 70s and 80s talking about calculators?

Rebecca Winthrop: With the calculator?

Daniel Barcay: Yeah. So tell me, why doesn’t that metaphor work for you?

Rebecca Winthrop: Oh my gosh, I can’t tell you how much this metaphor makes my head explode. So first off, let’s start, let us count the ways, Daniel. Number one, the calculator cognitively offloaded, initially, arithmetic, which is one small slice of mathematics, a few algorithms. The calculator did not go and do your English homework. It did not do all your coding for you. It did not create beautiful pieces of art. It did not create music. It did not talk to you like a person and then guilt trip you if you wanted to stop talking to it. It is completely different. It is not like a calculator at all because of its general purpose nature.

And it is so powerful, it is incredibly seductive to kids to stop the learning process itself. Calculators did not stop the learning process. It probably made it so kids don’t know their base arithmetic as well, although every math teacher will tell you, they did teach kids the basic arithmetic, and math, and division, and multiplication, and whatnot initially because you have to have knowledge in you. You can’t be creative unless you have knowledge in you. Domain expertise and knowledge of students is heavily correlated with their creative thinking.

Daniel Barcay: So you mentioned in there the attachment, right?

Rebecca Winthrop: Mm-hmm.

Daniel Barcay: And we just had Zak Stein on the podcast talking about how the competition for AI is no longer just attention, it’s attachment. Can you talk to how that shows up in the classroom? How is it that students are getting attached to these tools?

Rebecca Winthrop: Yes. Well, and remember, we were doing “a premortem exercise,” so we were looking at what we know about students’ learning and development vis-a-vis how this technology is being rolled out. And one of the things we know about student learning and development, is that young people learn in relationship to other people. We have evolved as a human species that way, so learning is fundamentally a social exercise, it’s also an embodied exercise.

It’s why we remember, when we’re reading a print book, the page that a special passage was on, because it’s in 3D, and we’re hardwired to remember things in 3D, and why we don’t necessarily remember the page when we read it online and we’re just scrolling, there’s no space there. But even more than that, young people learn with other people. They learn through back and forth exchange from the minute a mother or a father and a child start their relationship. That’s the same type of back and forth that happens in a classroom. So you need to be able to take feedback as a learner, that is how you learn. You learn from someone saying, “Oh, that’s not quite right. That didn’t quite work out, let’s try-”

Daniel Barcay: You mean taking a social risk, and getting up in front of the blackboard, and trying to do something-

Rebecca Winthrop: But even I hear that I was wrong, and I will take that in, and I will pivot, and learn, and try it a different way. And learning is based on feedback and mistakes. And what we worry about is the sycophantic nature of AI companions, for example, building an emotional social muscle in kids, where they’re always agreed with, that they’re less able to take feedback and make mistakes and recover in a classroom setting. And that will really undermine learning.

Daniel Barcay: I think this is so critical, right? People think that the classroom is a place where you go to get information, but it’s not, it’s this social crucible that you’re building, right?

Rebecca Winthrop: Yes, yes.

Daniel Barcay: And I think I worry that the endpoint of personalization, you keep talking about personalizing learning, but the more you personalize learning, the more you make it lonelier and lonelier, to the point where, and I think this is what you’re saying, is that part of what makes things stick in our mind is the social context that we’re in while we learn it.

Rebecca Winthrop: And the relationships, the fact that we feel we belong, we have a trusting relationship with our teacher, we feel seen. All those things make a huge difference in kids’ learning outcomes actually, because you learn in relationship to other people. And you’re totally right, Daniel, the classroom experience is not just to pass academic information from adults to young people. There are many other purposes and things that are going on for learning in a classroom. Self-regulation, kids realizing they can’t just do whatever they want whenever they want, perspective taking, you’re in a classroom with a bunch of other kids that aren’t necessarily your family or your neighbor. That’s a crucial foundational skill to learning, and life, and work.


Daniel Barcay: Okay, so let’s ground this a bit. So how does AI actually interfere with that? And to the point of your report, how do we make sure that it doesn’t interfere with that?

Rebecca Winthrop: So one of the things that we’re worried about is making sure that we can maintain classrooms to be as human as possible. Given that the world outside the school is flooded with all types of different technology and it’s a little harder to wrangle, can we, my colleague Jon Valant and I say this, can we make a commitment to the kids who are in school seven hours a day, whatever it is, 40 weeks a year, that it will be as human as possible? There will be time when young people are working eye to eye with each other and with adults. There will be time when they’re learning content, and they’re going together, and trying to solve a problem with that academic knowledge and content that they have to collaborate on. So we want to make sure that AI and technology generally doesn’t interfere with that time. It doesn’t mean not introducing it at all, it just means trying to safeguard the human to human social and academic interactions.

Daniel Barcay: And so help me, because I have to admit, there’s a part of me that is just wildly optimistic. I really want to believe that the “infinitely patient tutor,” who can sit and watch every mistake you make and remember and say, “Okay, oh, this is why you’re getting this wrong.” And teach to you this. I want to believe in the promise of that, but I also believe in all the risks you’re saying, so is there any-

Rebecca Winthrop: Is there a balance?

Daniel Barcay: Yeah.

Rebecca Winthrop: Yes, absolutely. Absolutely, there is. So we have to distinguish between a couple of things. One is teacher’s use of AI versus student direct use. Two is sort of wide AI use, where kids are just interfacing with frontier models, AI chatbots that are not designed or optimized for children or learning. Versus interfacing with some other type of technology that can really support and scaffold them, likely in partnership with a teacher. All of those are different scenarios.

Daniel Barcay: So start with the teacher’s use of technology. Earlier you talked about a loss of trust because teachers are using this and then kids are realizing, “My teacher’s using AI.” What should a teacher’s use of AI look like?

Rebecca Winthrop: I think one of the big benefits, we talked about AI dividend for teachers, is for educators in their administrative work. Educators have a ton of administrative work. It’s also really helpful for educators to use when they think about, how do they make slightly different reading levels for the different-

Daniel Barcay: Yeah, for all the different kids in the class who-

Rebecca Winthrop: Different kids, because any fourth grade teacher might have kids at a second grade reading level, all the way to a sixth grade reading level, right? So all of that stuff is what I would call back office use. It’s not showing up necessarily in front of a kid and a screen, and it can be really helpful. And so that is good. There are student-centered, student-facing AI uses, which I think can be great. And this is when I’m talking about AI being used in a very narrow, strategic way in the classroom. It could be you put on a pair of virtual reality goggles, and now with AI, you can make it a lot more interactive. You could be looking inside a cell, if you’re studying biology for 10 minutes of a bio class, and you could be interactive, “What is that? Move that here. Explain to me this.”

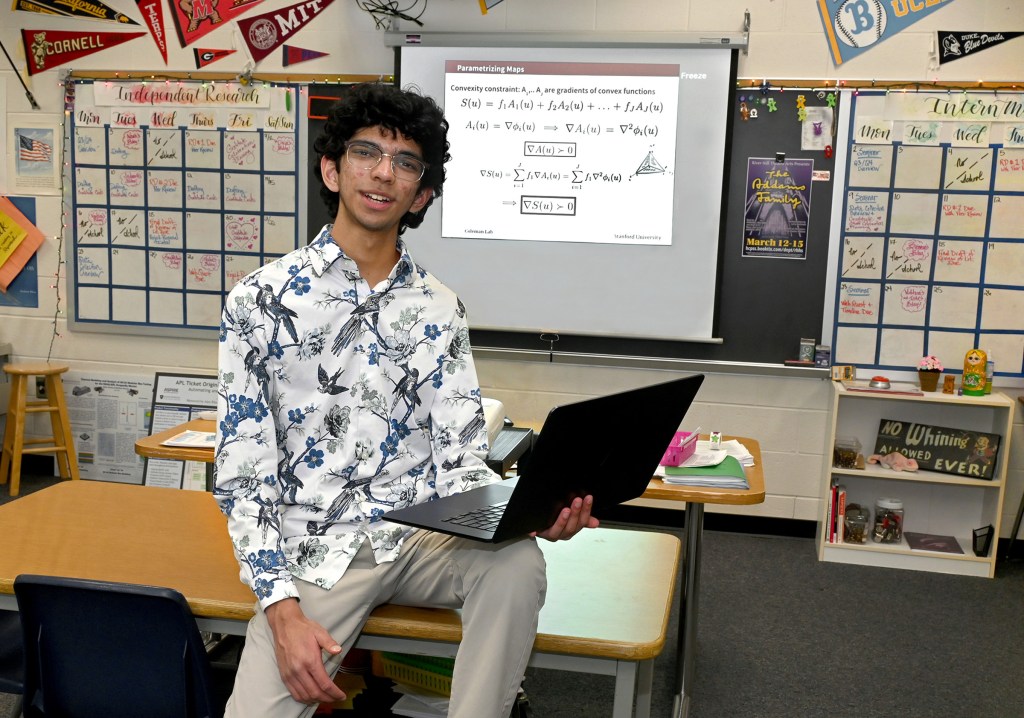

It could be really illuminating. We know it has a lot of potential. And then they put the headset away and then they’re onto the rest of their biology lesson. That’s a great usage. Or things like tutoring, another great usage. This is online tutoring for kids who are really far behind. Stanford has done some great research, where you are on Zoom, kid to tutor, and the tutor is using AI to try to pick up on where the kid is misunderstanding, and feed that information to the tutor who might not catch all of it. And that is really helpful, especially for novice tutors, new tutors who aren’t as sophisticated. So things like that can be quite impactful.


Daniel Barcay: Right. And all those promises seem wonderful, but somewhat dreamlike in a sense. Ground it for me, what’s the difference between the way a teacher you see in the classroom using AI right now in a way that you would consider risky or unhealthy and how they should be using it?

Rebecca Winthrop: I mean, to be honest, part of the thing I see is teachers aren’t addressing the fact that AI is there and is being used. And so it’s this pretending, and we’re going to continue to teach the same way. And meanwhile, homework is being hacked by kids with AI and/or if they have particularly one-to-one laptops, one kid told me, “Oh yeah,” this is a school with one-to-one laptops, “I have my assignment up on one half of the screen, and I have ChatGPT up on the other, and I just take it and copy it.” And they might adapt it a tiny bit so it doesn’t get flagged, and put it in the homework assignment. So it’s basically when teachers are not adapting the way they’re teaching, to recognize that kids will… Just assume, if kids can use AI, they will use it.

Daniel Barcay: This brings up a whole other topic that we need to talk about, which is assessment. It feels to me like assessment’s just fundamentally broken. The way we do tests, the way we do essay grading, the way we do assignments, it feels completely busted. And doesn’t it seem a bit high-minded to tell a teacher in the classroom to figure out a completely new way of assessing their students? What do we do about-

Rebecca Winthrop: Well, look, I think that my advice to teachers, and I get asked all the time, is two things. If your school doesn’t have it, create a little AI council in your classroom, and have a couple of kids be on it, and show them the assignments you’re going to give beforehand, and have them tell you how they would get around it.

Daniel Barcay: Oh, it’s like have your kids red team your assignments, have them try to break your assignments?

Rebecca Winthrop: Have your students red team your assignments. And if you can hack it with AI, do not assign it, come up with something else. Number two is your point about assessment. At the moment, given that AI is everywhere, I think actually in-class exams are a pretty good idea. I think oral presentations are a pretty good idea. And when I say in-class exams, exams where you can’t have GPT open on one half of the screen and the other. Or maybe written, there’s a problem, we’ve stopped teaching handwriting to kids, so they can’t write down. But in class, presentations, exams are a good idea. I do think that there are ways that educators can use AI, where students are using it to do much more rigorous and advanced work. However, again, it does not look like sitting kids in front of laptops with chatbots, and that they’re just tooling away unscaffolded, open-ended.

I’ll give you an example. There’s a school in Hawaii, which is a middle school, that is a public charter school, and they’ve gone all in on AI, but they do not have kids sitting in front of chatbots. They teach them machine learning. They teach them data science. They also have double periods in reading, and math, and lots of outdoor extracurricular activities, so they’re holding the human space. And in their science projects, for example, one project is looking at sea level rise, they are regularly outdoors measuring sea levels in their communities. And they go, and they take the data, and they put it in an AI application, and they do much more sophisticated, rigorous analysis with it. So they’re learning to use AI as an analytical tool to further their investigation.

Daniel Barcay: Right. Which seems like the way that we want them to use it and the way that we want ourselves to use it.

Rebecca Winthrop: Exactly. That is a good use.

Daniel Barcay: So I imagine people listening to this might say, “Yeah, but there’s a bunch of tools that are being developed.” ChatGPT now has a study mode and there’s other… Tell me about your thoughts on that one.

Rebecca Winthrop: Oh my God, don’t get me started on these things. That’s fine, have your study mode. I’m not begrudging all the frontier models who make the education version of your chatbot. The issue is to assume that students are going to be able to log on and have the normal frontier model chatbot, who will give you all the answers. Versus the broccoli, which is the study mode, and they’re going to choose the broccoli. I think it’s a fundamental misconception of virtually every technology company I’ve run into, the large scale technology company who designs for students, that you’re designing for kids who are motivated, highly motivated, probably because the designers and the developers were motivated students. That is not most students. Most students are in what we call passenger mode, in our book, and they are looking for the shortest way out, so be realistic.

Daniel Barcay: Okay, but then what does good look like? I mean, I’m seeing different boards of education try to release their own AI chatbot and force students to use it, or what would you recommend?

Rebecca Winthrop: Right. In terms of what we have to do to move in a positive direction, we found that there’s really three big things, we call them the three Ps, prosper, prepare, and protect. They are shift what teaching and learning looks like in school, prepare people through holistic AI literacy, and put in safety regulation and guardrails. So one thing is I would really think twice about having one-to-one laptops in the younger grades, for sure. Elementary school, possibly middle school, because kids can get around any block that the teachers put in. And for all the reasons we talked about, for the cognitive social-emotional development, they need to be interacting with others, paying attention, presenting, speaking. That’s a way to learn something, teaching it back to another peer.

Second, I would absolutely, absolutely go deep on what we’re calling holistic AI literacy. Here’s what AI is. Here’s how it’s made. Here’s what it is and isn’t. Here’s why it hallucinates. Here’s how you have to think about the ethics behind it. Here’s how you could create things that you care about, that you want to do in the world. And how you could use it wisely, how it could help you. And have real discussions. Kids are craving this. This is one thing we found. Kids are craving talking with adults about this stuff.

I talked to one sixth grade teacher who said, “I do AI literacy…” And you can do AI literacy without any screens in front of you, by the way. She starts in sixth grade, says, “I do AI literacy by having my students write an essay. They write two essays. They start with, what are you most worried about and what are you most excited about AI?” And they just get it all out, and they are aware. These are sixth graders. AI might end the world, was one answer, but it’s helpful to check my spelling or to help me with my essay. So they have opinions.

Daniel Barcay: If there are concrete recommendations for educators or technologists who are making this next generation of AI-enabled ed-tech, what are they?

Rebecca Winthrop: What I think the good will be in the future, and some people are experimenting with this, is when you don’t even know AI is there. So there’s early days where you have online, for example, for high school students and college students, textbooks, science textbooks, and they’re digital, and kids are interfacing with the material, but it’s AI embedded. And when a kid reads through a particular paragraph and just has read through it twice, doesn’t understand it, can go in and say, “I just read this twice. I can’t understand it. Can you explain it to me a different way?” That is a great example of AI use.

Kids don’t even know it’s there, there’s no sort of separate chatbot application you need to go to. It’s underneath, it’s behind, and the content and the learning experiences are out front. Similar to this idea of interactive virtual reality, or helping neurodivergent kids or kids with learning disabilities really access material that they couldn’t otherwise have. Dyslexic kids are doing text to speech, which has been around for a long time, but now it’s much more interactive with generative AI, and it can really help them accelerate their learning process. You could use AI tools as in the case of the school in Hawaii that is teaching their kids machine learning and data science. They’re plugging it in, but that is one piece of a much broader educational learning experience.

Daniel Barcay: I really like this vision, where the AI disappears into the background and just empowers both students and educators to do the cognitive work that they’re there to do.

Rebecca Winthrop: That’s right.

Daniel Barcay: But we see the incentives pointing in this other direction. I mean, to your point, the train tracks that we’re currently going on are towards just throwing more general purpose chat interfaces at students for grades and essays.

Rebecca Winthrop: Bad idea.

Daniel Barcay: Yeah, so how do we shift that? How do we end up at that different future?

Rebecca Winthrop: So my co-authors in our steering group, we had a big debate about, “Ooh, looks like…” Because we weren’t sure what we were going to find. “Ooh, looks like we’re heading down the wrong direction and the risks are really overshadowing the benefits. What do we have to do to bend the arc and move in a different direction?” And there are really three big things that we came up with. Number one is we have to shift what teaching and learning looks like, so it’s not hackable by AI, and it is really helping kids build the skills they need to be explorers and thrive in an AI world. The second thing we need to do is really help prepare the people, including students, but especially the people, educators, school leaders, district leaders, to understand what AI is, what it isn’t, what to be aware of, how to use it well, and what to avoid.

This is this idea of holistic AI literacy. And I would actually add families in there. There was a big gap we found. So much of the problem with AI, at the moment, hurting kids’ social, cognitive, and emotional development, is from sort of extended wild west AI use outside of school. So we need to bring families into the picture for holistic AI literacy. And then thirdly is we need safeguards. Kids shouldn’t be accessing frontier model chatbots that are unsafe for them. There should be duty of care laws. You should have regulation by design. School districts and states should band together and use their purchasing power to say, “We will only purchase AI safe for kids, products that have X, Y, and Z design features,” so that there is a market to drive safer AI products. So those are the three big things that we need to do to bend the arc.

Daniel Barcay: So clearly schools aren’t just about information, they’re about socialization, they’re about coming together and learning all of the skills that it takes to be an adult. And clearly, AI changes the nature of this game, but again, if you’re running a school, if you are a superintendent who just feels like they need to introduce AI tools or get left behind, what are their choices? And how do you help people make different choices?

Rebecca Winthrop: The one thing I would say is don’t be pressured. There is a superintendent I’ve exchanged with recently who said, “My motto is we are going to go slow to go fast.” And he said, “Before I start procuring AI tools and rolling them out, I don’t even understand it that well. And my staff, the teachers, the school leaders, everybody in the district, we don’t really understand it that well.” And he really was strong about it. And I think that’s the right approach. Figure out where it could help, who needs to build their capacity, in order to do it effectively. And it could be everybody. In fact, I think it is everybody, also students, also parents. So you really need to build that awareness, and then you can lean in and be very judicious, and careful, and find how it could empower and support kids flourishing.

Daniel Barcay: I love that, but also I’m not sure it’s a full answer, because my full-time job is to understand the developments in AI, and even I feel like I’m perpetually behind. Things come out every week, every month, and people say, “Have you seen this?” And I’m sort of saying, “No.” And yet, I don’t have any other job. This is my job, to stay on top of it, right?

Rebecca Winthrop: No, it’s fair. It’s fair. But you’d be surprised how little people know. I don’t think you need everybody in the school building to be an expert. There’s a great school district that came up with this analogy of, we need everyone to be able to swim in an AI world. We need basic swimming. Everyone needs to swim. Some people will have to snorkel. Maybe that’s the chief technology officer of a school district. Some people will be scuba divers, and those are the developers, but we need everybody to swim. So we don’t need everybody to be gurus, but you’d be surprised how little understanding there is of what AI even is, or that you shouldn’t put all your personal information in a freebie version of a frontier AI lab chatbot. It’s not necessarily safe. Basics that you and I might think are basic, people don’t totally understand.

Daniel Barcay: If you’re a parent who’s trying to start that conversation, a parent, a teacher even, how would you begin the conversation with your child about, what is this doing to us and how do we choose a better path?

Rebecca Winthrop: I’m so glad you asked this because one of the things we’ve just started to do at Brookings, and they’re free, they’re available on the website, is parent tip sheets. Because we found in our research, there was such a gap in AI literacy for parents, or even understanding how their kids are accessing AI, or what it is, and so people should check those out. And one of the things we start with is just having an open conversation with your… We made these for your 10 to 14-year-olds of, “Hey, have you heard of AI? Do you know what it is? Where do you think you run into it? Do you know any friends who use it?”

You don’t have to ask them directly, “Do you use it?” if you are worried about them being squirrelly. Although, often your 10, 12, 13-year-old will tell you. But say, “Do you know if any friends are using it?” They might not even know where they are interfacing with AI. And so that is the very first thing to do, non-judgmental, just get a baseline on how much they know, and then you can start talking about what it does do, and what it’s good for, and what it’s not good for.

Daniel Barcay: So hearing you talk about this, what’s funny to me is it seems almost as touchy as the sex or drugs conversations with kids. It seems like you’re saying-

Rebecca Winthrop: It’s like, “Have you ever smoked marijuana?” Yeah.

Daniel Barcay: Right, right.

Rebecca Winthrop: The reason I’m suggesting going in with a very open, non-judgmental stance, is that schools have banned AI. Kids know they’re maybe not supposed to use it.

Daniel Barcay: But they feel like they have to.

Rebecca Winthrop: And they’re feeling pressured like they have to, or they’re curious, or they’re using it and they don’t really understand, especially the younger generation. And you really want to have an open communication pathway. That is just well established in the parenting and adolescent development literature: that you need open lines of communication about everything, whether it’s drugs, or relationships, or friendship problems, or cheating, or whatever, including with AI.

Daniel Barcay: So much is changing about what we’re preparing kids for, we don’t even know… We had a whole set of episodes on the impact of AI on jobs. We don’t even know what careers are going to get disrupted. It seems like a bunch of them. There’s a question about, what is the world that we’re preparing our kids to actually inhabit? And what are the skills that are necessary for that world? What are you seeing educators do to try and prepare for this change? And what would be your recommendations? What should they do in a time of such transition?

Rebecca Winthrop: My main recommendation about, what do we need to help young people be able to know and do, is to make sure they master content knowledge and they master a love of learning. I think that the young people who are going to sail through this very, very complex, uncertain time, are the ones who are going to be super motivated and super engaged in learning new things. And in my book with Jenny, The Disengaged Teen, we talk about explorer mode. And less than 4% of kids, I think I’ve told you this before, Daniel, say they are regularly in explorer mode in middle school and high school.

Daniel Barcay: And just because people might not have heard that, I mean, if they didn’t listen to the last podcast, explorer mode for you is just being curious, connected.

Rebecca Winthrop: When kids are in explorer mode, they are resilient, they love learning, they are looking at the journey of the learning, rather than the outcome, like, “Ooh, I need to get an A on this.” If you’re in explorer mode, you’re not really actually that worried about generative AI, because you’re in it to learn and try to figure something out, and you bounce back from setbacks. So this love of learning and ability to learn new things is explorer mode, and kids need to practice learning new things and being super engaged and motivated. It is something we can develop in them, it isn’t just something that kids either have or they don’t, all kids can have this ability to learn new things.

So content knowledge, being in explorer mode, ability to learn new things, and a strong ethical orientation. What is the world I want to live in? How should I treat my friends? How should our communities treat each other? These are really important things that AI is not going to answer. We, humans, are the ones who are going to have to point this technology towards the goals we want. And the more young people feel like they’re in the driver’s seat and equipped to chart the world we want, the better off they’ll be.

Daniel Barcay: I mean, I love this answer, because I’ve often thought that the answer to the question, what are the skills that you need for an AI age, is not actually more technical skills. It’s actually some of the most deeply human things: curiosity, like you’re saying, intellectual humility, determination, sociality, even emotional things around being able to hear and respond to feedback. I mean, it seems like you agree that a return to the focus on the most human skills will serve us in the AI age.

Rebecca Winthrop: Absolutely. Absolutely. If we dispense with those and we lean in merely on technical proficiency, the technology’s changing so fast, that’s going to be obsolete in two, three years. We’ve got quantum coming, we’ve got embedded AI in our clothes and our glasses, we’ve got embodied robotic AI. So we really need young people to be sort of ethical, grounded, lovers of learning new things.

Daniel Barcay: I really applaud your work in trying to present some sort of guardrail, some sort of roadmap for, how do we do this well, before we look back and say, “Oh, we didn’t do that well, we did that much more poorly.” Thank you so much for your continued work and thanks for coming on Your Undivided Attention.

Rebecca Winthrop: Thank you. Thank you for having me.

RECOMMENDED MEDIA

A New Direction for Students in an AI World

Rethinking School in the Age of AI

Attachment Hacking and the Rise of AI Psychosis

How OpenAI’s ChatGPT Guided a Teen to His Death

AI and the Future of Work: What You Need to Know