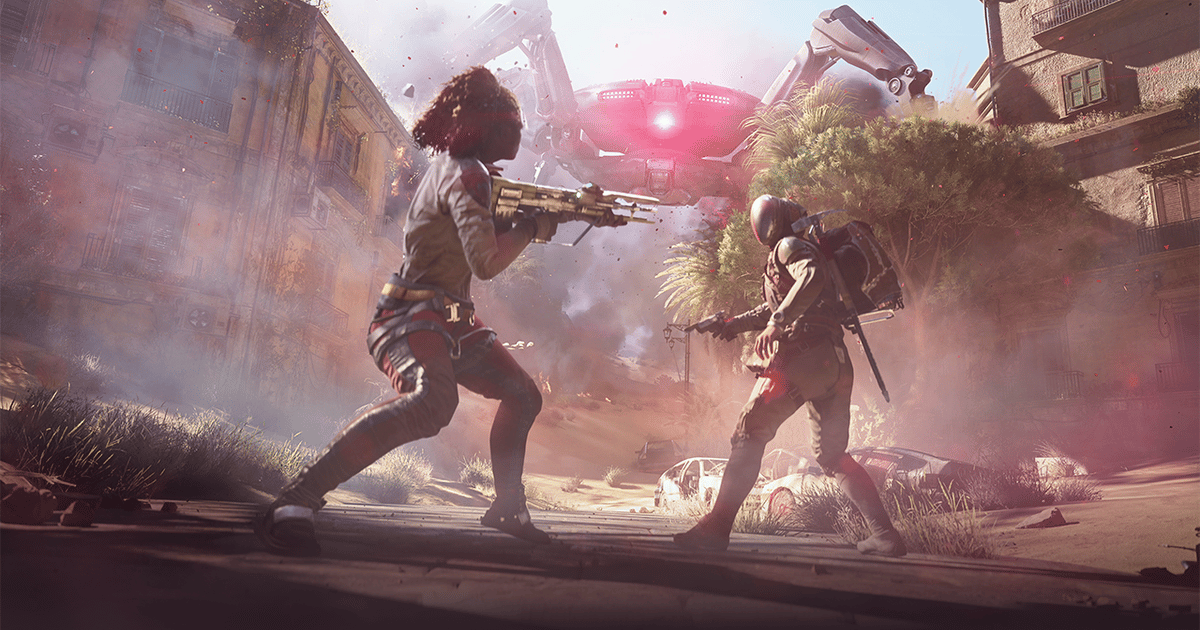

Sony’s gaming division just bought an AI startup that turns photos into 3D volumes

Sony Interactive Entertainment, owner of the PlayStation brand, has acquired Cinemersive Labs, a UK startup developing tools to convert 2D photos and videos into 3D volumes. The startup team will join Sony's Visual Computing Group, a research engineering team focused on graphical technology, including game rendering, video coding and generative AI models. Cinemersive's most recent