How to Create Music Visuals with a Top AI Audio Visualizer in 2026 – Technology Org

Historically, an audio engineer or digital artist who wanted to generate visuals for a song relied on hard-coded spectrum analyzers. These early scripts simply mapped audio frequencies to moving geometric shapes. Artificial intelligence has since re-engineered this process: driven by latent diffusion models and other neural networks, modern software can translate sound into complex, photorealistic video with a cinematic feel.

However, syncing generated video frames to audio transients remains a significant computational challenge. Before a generative engine renders a single frame, it relies on tempo detection, functioning much like a high-precision BPM finder, to map the rhythmic structure of the track: its tempo and the timing of its beats. Once this rhythm data is extracted, the challenge is finding an AI music visualizer that can place its cuts exactly on the beat.
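
To make the tempo-detection step concrete, here is a minimal sketch of an energy-based BPM finder in plain Python. It is not the method used by any platform discussed here; the function names, the frame size, and the `threshold_ratio` parameter are assumptions chosen for illustration, and production tools typically use more robust onset and tempo models.

```python
def frame_energies(samples, frame_size):
    """Sum of squared samples for each non-overlapping frame."""
    return [
        sum(s * s for s in samples[i:i + frame_size])
        for i in range(0, len(samples) - frame_size + 1, frame_size)
    ]


def detect_onsets(energies, threshold_ratio=1.5):
    """Indices of frames where energy rises above threshold_ratio x the mean.

    Only the rising edge counts, so a loud passage yields a single onset.
    """
    if not energies:
        return []
    threshold = threshold_ratio * sum(energies) / len(energies)
    onsets = []
    for i, energy in enumerate(energies):
        previous = energies[i - 1] if i > 0 else 0.0
        if energy > threshold and previous <= threshold:
            onsets.append(i)
    return onsets


def estimate_bpm(onset_frames, frame_size, sample_rate):
    """Convert the median gap between onsets into beats per minute.

    Returns None when fewer than two onsets were found.
    """
    times = [f * frame_size / sample_rate for f in onset_frames]
    gaps = sorted(b - a for a, b in zip(times, times[1:]))
    if not gaps:
        return None
    median_gap = gaps[len(gaps) // 2]  # upper median for even counts
    return 60.0 / median_gap


def click_track(bpm, seconds, sample_rate):
    """Synthetic test signal: a 10 ms burst on every beat, silence elsewhere."""
    period = int(sample_rate * 60 / bpm)
    burst = sample_rate // 100
    return [1.0 if n % period < burst else 0.0
            for n in range(int(seconds * sample_rate))]
```

Feeding `click_track(120, 4, 8000)` through `frame_energies` (with a frame size of 400), `detect_onsets`, and `estimate_bpm` recovers the tempo of the synthetic track. Real music needs overlapping windows and smarter thresholds, but the pipeline shape (energy, onsets, intervals, tempo) is the same.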

To help technologists and digital creators navigate this evolving software landscape, we have analyzed the top five platforms of 2026. Here is a technical breakdown of the engines defining the modern AI Audio Visualizer space.

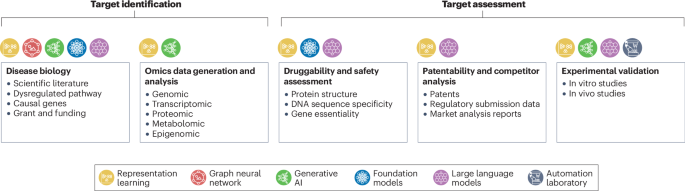

2026 Computational Video Rendering Matrix

| Platform | Core Algorithmic Model | Audio Reactivity (Source) | Spatial Consistency |
| --- | --- | --- | --- |
| Freebeat | Audio-Guided Neural Rendering | High (Frame-level beat & energy synchronization) | High (Character/Lip-Sync) |
| Neural Frames | Audio-Reactive Deforum Diffusion | Extreme (Stem-Level FFT Data) | Low (Constant Morphing) |
| Kaiber | Amplitude-Driven Video Diffusion | Basic (Master Output Energy) | Moderate (Stylized) |
| Luma Dream Machine | Transformer-Based Video Generation | None (Deaf to Audio) | Extreme (Photorealistic) |
| Runway Gen-3 | General Video Foundation Model | None (Deaf to Audio) | Extreme (Physics-Accurate) |

- Freebeat

For creators demanding precise synchronization between audio transients and video frame rates, Freebeat operates as a highly sophisticated Audio Visualizer. Rather than just reacting to master volume, its underlying architecture utilizes deep waveform analysis to automate the editorial process. It represents the current technological benchmark for an AI Audio Visualizer designed specifically for rhythmic accuracy.

- Strength: Superior algorithmic synchronization. The engine actively parses the track’s tempo and structural drops to automate scene transitions without manual rendering. Additionally, its deep phoneme-recognition model delivers >90% lip-sync accuracy, anchoring digital avatars firmly to the vocal track.

- Weakness: The computational rigidity required to maintain perfect beat-syncing means the platform is less flexible for generating completely unstructured, chaotic visual noise.

- Best For: Digital musicians, A/V technologists, and producers who require a precisely timed, broadcast-ready visual release with no perceptible lag between the kick drum and the visual cut.
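
The idea of automating scene transitions from tempo data can be illustrated with a small sketch that snaps proposed cut points to the nearest detected beat. This is an assumption about how beat-locked editing could work in general, not a description of Freebeat's engine; `snap_to_beat` and `snap_cuts` are hypothetical helper names.

```python
from bisect import bisect_left


def snap_to_beat(t, beats):
    """Return the beat timestamp closest to t.

    beats must be a non-empty list sorted in ascending order (seconds).
    """
    i = bisect_left(beats, t)
    # Only the beat just before t and the beat at or after t can be closest.
    candidates = beats[max(0, i - 1):i + 1]
    return min(candidates, key=lambda b: abs(b - t))


def snap_cuts(cuts, beats):
    """Snap each proposed cut to its nearest beat.

    Consecutive cuts that land on the same beat are merged into one.
    """
    snapped = []
    for cut in cuts:
        beat = snap_to_beat(cut, beats)
        if not snapped or beat != snapped[-1]:
            snapped.append(beat)
    return snapped
```

With beats every half second, a rough cut list such as `[0.1, 0.2, 0.7, 1.3]` collapses to cuts that all sit exactly on a beat, which is the property a rhythm-aware editor needs.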

- Neural Frames

Neural Frames approaches video generation through the lens of pure audio data. It is a highly specialized AI Music Visualizer that utilizes Fast Fourier Transform (FFT) algorithms to isolate specific frequencies in a track and map them directly to visual prompts.

- Strength: Unmatched stem-level reactivity. Technologists can isolate the frequency of a snare drum or a sub-bass and command the AI to generate localized visual anomalies (like color shifts or geometric fractal growth) exclusively when those frequencies trigger.

- Weakness: The latent diffusion model prioritizes reactivity over spatial consistency, leading to heavy “hallucinations.” It lacks the neural mapping required for lip-syncing or stable human narrative generation.

- Best For: EDM producers, VJs, and technical sound designers who want to create hypnotic, data-driven visualizers that map directly to the physics of their sound waves.
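
As a rough illustration of frequency-band reactivity, the sketch below runs a textbook radix-2 FFT over one audio frame and reports which band carries the most energy. It uses only the standard library so it stays self-contained; the band edges in `BANDS` and all function names are assumptions for illustration, and this is not Neural Frames' implementation. True stem-level analysis would also involve separating the mix into stems, which is not shown.

```python
import cmath
import math


def fft(x):
    """Recursive radix-2 Cooley-Tukey FFT; len(x) must be a power of two."""
    n = len(x)
    if n == 1:
        return [complex(x[0])]
    even = fft(x[0::2])
    odd = fft(x[1::2])
    out = [0j] * n
    for k in range(n // 2):
        twiddle = cmath.exp(-2j * cmath.pi * k / n) * odd[k]
        out[k] = even[k] + twiddle
        out[k + n // 2] = even[k] - twiddle
    return out


def band_energy(frame, sample_rate, low_hz, high_hz):
    """Sum of squared FFT magnitudes for bins centred in [low_hz, high_hz]."""
    n = len(frame)
    spectrum = fft(frame)
    bin_hz = sample_rate / n
    return sum(
        abs(spectrum[k]) ** 2
        for k in range(n // 2 + 1)
        if low_hz <= k * bin_hz <= high_hz
    )


# Hypothetical band edges in Hz; real instruments overlap far more than this.
BANDS = {"sub_bass": (20, 80), "snare": (150, 250)}


def dominant_band(frame, sample_rate, bands=BANDS):
    """Name of the band with the highest energy in this frame."""
    energies = {
        name: band_energy(frame, sample_rate, low, high)
        for name, (low, high) in bands.items()
    }
    return max(energies, key=energies.get)


def sine(freq_hz, n, sample_rate):
    """Test tone: n samples of a unit-amplitude sine wave."""
    return [math.sin(2 * math.pi * freq_hz * i / sample_rate) for i in range(n)]
```

A visualizer built this way would call `dominant_band` (or read the individual band energies) once per video frame and use the result to trigger a visual change, for example a color shift only when the `snare` band spikes.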

- Kaiber

Kaiber utilizes an amplitude-driven diffusion architecture. It is widely recognized as a highly accessible AI Audio Visualizer that translates simple text prompts and audio uploads into fluid, stylized animations.

- Strength: Excellent stylistic rendering and a highly optimized graphical user interface (GUI). It rapidly processes complex artistic prompts (e.g., “cyberpunk watercolor”) and binds the intensity of the visual generation to the overall decibel output of the track.

- Weakness: It lacks transient awareness. Because it reacts to general master energy rather than precise BPM mapping, it often misses rapid drum fills or complex rhythmic structures. Its diffusion model also suffers from heavy spatial morphing over extended durations.

- Best For: Creators generating highly stylized, short-form looping assets or social media visualizers where algorithmic beat-precision is secondary to artistic aesthetic.
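
Amplitude-driven reactivity can be sketched as a loudness envelope: measure the RMS level of each window, convert it to decibels, and scale it to a 0 to 1 intensity value. This is an illustrative assumption about the general technique, not Kaiber's actual pipeline; the function names and the -60 dB floor are hypothetical choices.

```python
import math


def rms(frame):
    """Root-mean-square level of one frame of samples in the range -1..1."""
    return math.sqrt(sum(s * s for s in frame) / len(frame))


def to_dbfs(level, floor_db=-60.0):
    """Convert a linear level to dB relative to full scale, clamped at floor_db."""
    if level <= 0:
        return floor_db
    return max(floor_db, 20 * math.log10(level))


def intensity_curve(samples, frame_size, floor_db=-60.0):
    """One intensity value per non-overlapping frame.

    floor_db maps to 0.0 and 0 dBFS maps to 1.0.
    """
    curve = []
    for i in range(0, len(samples) - frame_size + 1, frame_size):
        db = to_dbfs(rms(samples[i:i + frame_size]), floor_db)
        curve.append(min(1.0, (db - floor_db) / -floor_db))
    return curve
```

Because each value averages a whole window of the master output, a short drum fill inside a long window barely moves the curve, which is one way to see why this approach lacks transient awareness.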

- Luma Dream Machine

Luma Dream Machine is built on a large transformer-based architecture capable of generating highly photorealistic, physics-accurate video. While not natively an AI music visualizer, its rendering capabilities make it a valuable asset in A/V production.

- Strength: Unprecedented graphical fidelity. The model computes light tracing, camera physics, and environmental textures with near-perfect realism, delivering cinematic footage that challenges traditional CGI.

- Weakness: The model is entirely deaf to audio and accepts no audio parameters. To build visuals for a song with Luma, users must export silent video files and synchronize them to the audio manually in a non-linear editor (NLE) such as Premiere Pro.

- Best For: High-end VFX artists and video editors who possess the technical proficiency to manually sync photorealistic AI-generated clips to external audio tracks.
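
For the manual workflow described above, the arithmetic an editor needs is the conversion from beat positions to frame numbers and timecodes on the NLE timeline. The sketch below assumes a constant tempo, an integer frame rate, and non-drop-frame timecode; the function names are hypothetical and nothing here is specific to Luma or Premiere Pro.

```python
def beat_times(bpm, duration_s, offset_s=0.0):
    """Timestamps in seconds of every beat before duration_s.

    offset_s is the position of the first beat in the track.
    """
    period = 60.0 / bpm
    times = []
    n = 0
    while offset_s + n * period < duration_s:
        times.append(offset_s + n * period)
        n += 1
    return times


def to_frame(t, fps):
    """Nearest video frame index for a time in seconds."""
    return round(t * fps)


def to_timecode(frame, fps):
    """Non-drop-frame HH:MM:SS:FF timecode for an integer frame rate."""
    frames = frame % fps
    total_seconds = frame // fps
    hours = total_seconds // 3600
    minutes = total_seconds % 3600 // 60
    seconds = total_seconds % 60
    return f"{hours:02d}:{minutes:02d}:{seconds:02d}:{frames:02d}"
```

At 120 BPM and 24 fps a beat falls every 12 frames, so cutting silent clips at those frame numbers lines them up with the audio. Tracks with tempo changes or swing would need detected beat times rather than a fixed period.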

- Runway Gen-3

Runway Gen-3 functions as a foundational video generation model. It offers granular algorithmic control over camera trajectories, depth of field, and subject motion.

- Strength: Advanced spatial controls. Directors can input precise mathematical parameters for camera tracking, panning, and zooming, ensuring the generated assets look like they were filmed on a physical cinematic rig.

- Weakness: Much like Luma, Runway’s current API does not support native audio reactivity. Utilizing it as an AI Audio Visualizer demands extensive manual post-production to ensure the visual elements align with the audio’s rhythmic data.

- Best For: Professional video engineers and cinematographers who need to synthesize high-budget B-roll footage and are equipped to handle complex post-production editing workflows.

Conclusion: Automating the Audiovisual Pipeline

The intersection of machine learning and digital audio has fundamentally altered how we create music visuals. In 2026, the distinguishing factor between competing AI models is no longer just visual quality, but audio-computational intelligence.

While foundational models like Runway and Luma push the boundaries of photorealistic generation, they still require laborious manual alignment. For creators looking to optimize their workflow, a dedicated AI audio visualizer like Freebeat, which computes BPM, transients, and lip-sync data natively within its rendering engine, bridges the gap between complex code and seamless digital art. By leveraging these rhythm-aware algorithms, technologists and artists alike can deliver tightly synchronized audiovisual projects far faster than manual editing allows.