Multimodal artificial intelligence and online learning in youth mental health: a scoping review – npj Mental Health Research

Introduction

In 2022, the World Health Organization (WHO) reported that 1 in 12 children aged 0–9 years and 1 in 7 adolescents aged 10-19 years have a mental health problem1,2,3. In Canada, it was reported that 1 in 5 children and adolescents aged 4–17 years had a mental health problem, yet less than one-third appeared to have had contact with a mental health professional4. The situation worsened during and after the COVID-19 pandemic. While visits to emergency departments (ED) decreased at the onset of the pandemic, the proportion of hospitalizations for mental disorders increased. Additionally, physician-based consultations for mental health problems (which rapidly transitioned to virtual) increased disproportionately, particularly among adolescent females5,6. This increase in mental health service utilization coincided with a significant rise in the prevalence of mood and anxiety disorders among youth in 2022 compared to 2012, as reported by Statistics Canada7.

The use of AI in healthcare has been steadily increasing, with researchers continually developing new models for various healthcare applications8,9,10,11,12. Specifically, AI applications for mental health problems have led to significant advances in the analysis, detection, prediction, and treatment assistance for mental illnesses. These advancements leverage various data types, including physiological signals (e.g., Electroencephalography (EEG), Galvanic Skin Response (GSR), or voice), brain images, electronic health records (EHRs), psychological tests, social media platforms (e.g., Reddit), and monitoring systems (e.g., smartphones)13,14. In the context of youth mental health (YMH) problems, data are often dynamic, heterogeneous, and continuously generated from multiple sources. This characteristic makes it difficult for static, offline models to remain accurate and representative over time. Therefore, online learning emerges as a natural complement to multimodal AI, as it enables models to be updated incrementally as new data become available, ensuring better adaptability to real-world and evolving conditions.

This scoping review aims to identify knowledge gaps in the existing literature on the use of multimodal AI models and online training in the detection and treatment of mental health-related problems in youth. Through a comprehensive analysis of key concepts and methodological approaches, the review addresses the following research question:

What is the current status of research using online learning and multimodal AI models for diagnosis, monitoring and/or treatment of mental health problems in youth populations?

The novel contribution of this scoping review is the integration of online learning within the use of multimodal AI methods for YMH support, including research that employs AI for diagnosis, monitoring, and/or treatment of YMH problems. This broader inclusion enriches the subsequent discussion, which will primarily focus on methodologies based on online learning and multimodal AI.

The structure of this paper is as follows. Section “Methodology” outlines the methodology, including the search strategy and inclusion/exclusion criteria. Section “Results” summarizes the main findings across the identified studies. Section “Discussion” discusses these findings in relation to current trends, methodological challenges, and opportunities for future research. Section “Conclusion” concludes the review by highlighting key implications for the use of multimodal AI and online learning in youth mental health.

Background

AI is defined as the ability of machines to simulate human intelligence15,16. Within AI, machine learning (ML) refers to a system’s ability to learn and solve tasks related to a specific problem by generating a model through an automated process that analyzes a set of training data16,17,18. Deep learning (DL) is a subset of ML that relies on neural networks involving larger models in terms of layers and a greater number of parameters16,17,18. In this article, we adopt the convention of referring to classical machine learning algorithms (e.g., decision trees, support vector machines, ensemble methods, regression models) as ML, while the term DL is reserved for approaches based on neural networks, including deep neural architectures.

Multimodal AI refers to models that are trained with data of different types or sources (e.g., physiological signals, brain images, electronic health records, psychological tests, or data from social media and smartphones) with the goal of improving accuracy and generalization19. Multimodal approaches, in addition to facing typical unimodal challenges such as data homogenization, missing values, and difficulties in finding ground truth20, must also address additional challenges, including the selection of the type of data fusion (early, intermediate, or late), model interpretability, and the management of computational costs19,21.
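The difference between early and late fusion can be illustrated with a minimal Python sketch. The feature values, modality names, and weights below are hypothetical and are not taken from any reviewed study; intermediate fusion, which merges learned representations inside a model, is not shown.

```python
def early_fusion(modalities):
    """Early fusion: concatenate per-modality feature vectors into one input."""
    fused = []
    for features in modalities:
        fused.extend(features)
    return fused


def late_fusion(probabilities, weights=None):
    """Late fusion: combine per-modality model outputs by weighted averaging."""
    if weights is None:
        weights = [1.0] * len(probabilities)
    total = sum(weights)
    return sum(p * w for p, w in zip(probabilities, weights)) / total


# Hypothetical features for one sample from two modalities.
eda_features = [0.2, 0.7]
heart_rate_features = [72.0]
print(early_fusion([eda_features, heart_rate_features]))

# Hypothetical stress probabilities produced by two separate unimodal models.
print(late_fusion([0.9, 0.5]))
```

In early fusion a single model sees all features at once, whereas in late fusion each modality keeps its own model and only the outputs are merged, which makes it easier to handle a missing modality at the cost of ignoring cross-modal interactions.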

In the context of multimodal AI applied to YMH problems, data streams are rarely static but instead arrive continuously from diverse sources. This makes it necessary to explore online learning as a complementary paradigm. To provide a clear understanding of the concept of online learning in this scoping review, we first introduce a general definition followed by key applications. Hoi et al. conducted an extensive review that effectively covers the concepts, general aspects, and modalities of online learning22 which we draw from. Online learning is a method that updates AI models using sequentially arriving data streams22,23, defined as massive and potentially unlimited sequences of data24. In contrast, with traditional AI models training is typically performed offline25,26 due to factors such as computational cost, the convenience of handling static data, consistency, and reproducibility. However, offline methods have limitations including low efficiency and poor scalability in large-scale problems, as updating the models often means retraining them from scratch. Online learning addresses these challenges by enabling the development of more efficient and scalable models by continuously incorporating diverse and representative data22, which is particularly valuable for applications where real-world data significantly differs from those collected under controlled conditions. Some applications of different online learning methods include online multi-task where different models perform tasks in parallel, typically consisting of a general model and specific ones, and then merge to produce a unified output. This approach has been applied in social network-based sentiment analysis27, spam email detection and even in predicting peptide interactions with specific protein complexes28. In these cases, the data sources, such as posts, emails, or molecular structures, allow models to be updated as new samples become available. 
Similarly, cost-sensitive approaches applied to large-scale simulated data have proven effective in detecting anomalies, such as cyber-attacks29 or malware30. Additionally, several architecture and parameter optimization strategies have been proposed to address the challenge of scalability in online learning models31,32,33.
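To make the idea of updating a model from sequentially arriving data concrete, the following is a minimal sketch of an online logistic regression trained one sample at a time with stochastic gradient descent. The class name, learning rate, and toy stream are assumptions chosen for illustration; this is not the method of any cited work.

```python
import math


class OnlineLogisticRegression:
    """Binary classifier updated one sample at a time (illustrative only)."""

    def __init__(self, n_features, learning_rate=0.1):
        self.weights = [0.0] * n_features
        self.bias = 0.0
        self.learning_rate = learning_rate

    def predict_proba(self, x):
        z = self.bias + sum(w * xi for w, xi in zip(self.weights, x))
        z = max(-30.0, min(30.0, z))  # clamp to avoid overflow in exp
        return 1.0 / (1.0 + math.exp(-z))

    def learn_one(self, x, y):
        # Gradient of the log loss for a single (x, y) pair.
        error = self.predict_proba(x) - y
        self.weights = [
            w - self.learning_rate * error * xi
            for w, xi in zip(self.weights, x)
        ]
        self.bias -= self.learning_rate * error


# Hypothetical stream: one feature, label 1 when the feature is high.
stream = [([0.0], 0), ([1.0], 1)] * 200

model = OnlineLogisticRegression(n_features=1)
for x, y in stream:
    model.learn_one(x, y)  # the model is never retrained from scratch

print(model.predict_proba([1.0]) > 0.5)
print(model.predict_proba([0.0]) < 0.5)
```

Each sample is used once and then discarded, so memory use stays constant regardless of how long the stream runs.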

It is important to distinguish online learning from related paradigms like incremental learning or incremental retraining, which are often used interchangeably in the literature34. While online learning involves continuous model updates from streaming data22,23, incremental learning refers more broadly to algorithms that update a model progressively as new training data become available, generating a sequence of models h_1, h_2, …, h_t where each new model is constructed based on the previous one and a limited number of recent observations34,35.

Definition of youth population

The definition of the youth population was based on the standardization of age references proposed by Diaz et al.36, which facilitates the analysis of data across different life stages36. Following this criterion, we established the upper age limit at 25 years. Therefore, a study was considered to include a youth population if its dataset comprised participants aged up to 25 years or if the average age was below 25. In cases where the data consisted of texts from social networks, the statistical distribution of user ages across different platforms was analyzed based on the years of database publication. The analysis revealed that more than 60% of social media users are in the age range of 13–34 years, with the highest concentration between 13 and 24 years old37,38,39,40.
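The age-based inclusion rule described above can be written as a small check. This is a sketch of the stated criterion only; the function and argument names are hypothetical, and it does not cover the separate reasoning applied to social media text.

```python
YOUTH_AGE_LIMIT = 25  # upper age limit adopted in this review


def includes_youth(max_age=None, mean_age=None):
    """Return True if a dataset meets the age criterion for a youth population.

    A dataset qualifies if all participants are aged up to 25 years,
    or if the reported average age is below 25.
    """
    if max_age is not None and max_age <= YOUTH_AGE_LIMIT:
        return True
    if mean_age is not None and mean_age < YOUTH_AGE_LIMIT:
        return True
    return False


print(includes_youth(max_age=24))
print(includes_youth(max_age=40, mean_age=22.5))
print(includes_youth(max_age=40, mean_age=31.0))
```

A study that reports neither a maximum nor a mean age returns False here, which corresponds to the studies whose youth status could not be verified.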

Understanding the youth population within this age range is essential, since YMH differs from adult mental health in several important ways that are relevant to this review. Adolescence and young adulthood (up to ~25 years) involve profound neurobiological, emotional, and social developments that increase vulnerability to mental health problems, with up to 75% of lifetime disorders beginning in this developmental window41,42,43. Youth also face unique risk environments (from peer dynamics to family context and adverse childhood experiences) that are less prevalent in adulthood44,45. Moreover, the transition from child and adolescent mental health services to adult services is often fragmented, leaving many young people unsupported during a critical period44. These factors impact not only the type of data available but also the design and applicability of AI models for detection, monitoring, and intervention in this population.

Methodology

To address the research question, a systematic search was conducted to identify relevant studies, select those aligned with key topics, extract data and relevant results, and report the findings. This scoping review followed the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) guidelines to enhance transparency and completeness in reporting, ensuring the reliability and validity of the presented information46,47,48.

Search strategy

A structured literature search was conducted in MEDLINE, IEEE Xplore, Compendex, and Inspec (via Engineering Village) during the period November 2024 to February 2025. This time frame refers to when the searches were carried out. The search was limited to articles published from January 2015 onwards. The starting year (2015) was selected to examine the evolution of research in this area over the past decade. The final search string was developed based on four core concepts: Youth Mental Health, Artificial Intelligence, Sensors, and Online Learning. Keywords related to each concept were combined using Boolean operators. The complete search string is provided below:

(“Youth Mental Health” OR “Medical services” OR “Human factors” OR “Mental Diseases” OR “Stress Detection” OR “Anxiety Detection” OR “Depression Detection” OR “Mental Disorders Detection” OR “Suicide detection” OR “Youth Health Care” OR “Youth Psychology” OR “Youth Safety”) AND (“Machine Learning” OR “Artificial Intelligence” OR “Deep learning” OR “Natural language processing” OR “Emotion recognition” OR “Predictive Models”) AND (“Sensors” OR “Soft sensors” OR “Monitoring” OR “Wearable sensors” OR “Biomedical monitoring” OR “Real-time systems” OR “Internet of things” OR “Social networking” OR “Mobile applications”) AND (“E-health” OR “Data stream” OR “Online methods” OR “Online learning” OR “Online classification” OR “Real-time Model Training” OR “AI Model Online training” OR “AI Model Update” OR “Online Learning Algorithms” OR “Real-time Model Adaptation” OR “Continuous Learning in AI” OR “Incremental Learning” OR “Machine Learning Online” OR “Adaptive AI Systems” OR “Online Neural Network Training” OR “Continuous Data Assimilation in AI” OR “Online Learning Systems”)

Search filters included: articles published in English from January 1, 2015, onwards. Additional search iterations with minor string adjustments were performed to ensure coverage. Modified strings are available in the supplementary material.
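The structure of the search string (terms joined with OR within a concept, concepts joined with AND) can be reproduced programmatically, which helps when generating the adjusted variants. The sketch below uses an abbreviated subset of the keywords for brevity, and the helper names are hypothetical.

```python
def or_group(terms):
    """Quote each term and join the terms of one concept with OR."""
    return "(" + " OR ".join(f'"{term}"' for term in terms) + ")"


def build_query(concepts):
    """Join the concept groups with AND."""
    return " AND ".join(or_group(terms) for terms in concepts)


# Abbreviated subset of the four core concepts; the full lists appear above.
concepts = [
    ["Youth Mental Health", "Stress Detection", "Depression Detection"],
    ["Machine Learning", "Artificial Intelligence", "Deep learning"],
    ["Sensors", "Wearable sensors", "Mobile applications"],
    ["Online learning", "Data stream", "Incremental Learning"],
]

print(build_query(concepts))
```

Note that this sketch emits straight quotation marks; individual database interfaces may differ in the quoting and field syntax they accept.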

Study selection

The criteria I1-I4 and E1-E3 were defined to ensure consistency in the selection process. Domain refers to the thematic focus of the study, specifically whether it addresses mental health problems or applications of AI. Methodology indicates that AI methods must be explicitly implemented in the study. Data Types denotes that the study must use data suitable for training or evaluating AI models. Article Type specifies the kind of publications considered eligible. These criteria were chosen to maintain methodological rigor while ensuring that the studies included were directly relevant to the scope of this review.

The following inclusion and exclusion criteria were used to select relevant studies for the scoping review:

- I1. Domain: Focused on AI applied to related mental health problems detection, monitoring, treatment or decision making.
- I2. Methodology: Employs AI technique(s).
- I3. Data Types: Data related to the generation of AI models.
- I4. Article Type: Original research articles (not reviews or preprints).
- E1. Domain: Unrelated to AI for mental health problems application.
- E2. Data Types: Studies without data for modeling and/or analysis.
- E3. Publication Type: Preprints and review articles.

Screening

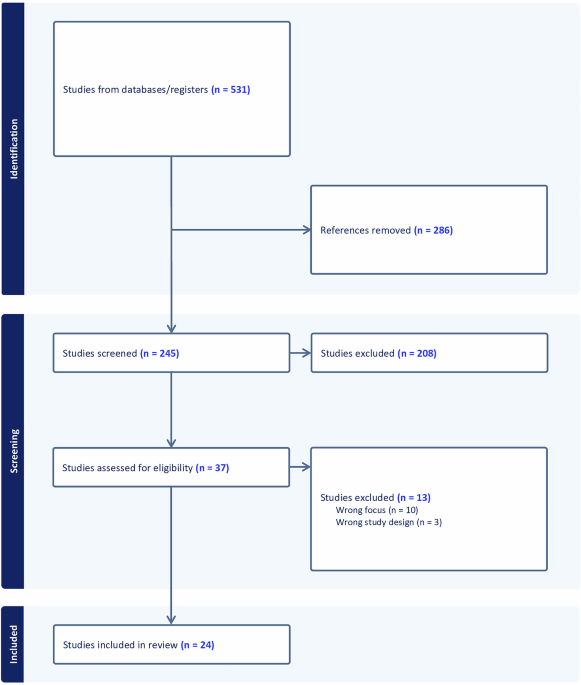

Study selection was conducted using Covidence systematic review software (Veritas Health Innovation, Melbourne, Australia; www.covidence.org). Titles and abstracts were screened independently by two reviewers according to predefined inclusion and exclusion criteria. Full-text screening followed the same methodology. Disagreements between the two reviewers were resolved through discussion in both stages. Figure 1 shows the PRISMA46,47,48 flow diagram, which reports the number of records at each step.

Study selection and exclusion diagram according to the PRISMA guideline.

Final selection

The initial database search yielded a total of 531 articles, of which 286 were identified as duplicates and removed, leaving 245. After title and abstract screening, 37 of the 245 articles were retained. Following full-text review, 24 studies met the inclusion criteria and were included for data extraction.
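As a quick consistency check, the counts reported above can be chained in a few lines. The exclusion counts at each stage are derived here by subtraction and are not stated explicitly in the text; the function name is hypothetical.

```python
def prisma_flow(identified, duplicates, kept_after_screening, included):
    """Derive the per-stage counts of a selection flow from the reported totals."""
    screened = identified - duplicates
    return {
        "screened": screened,
        "excluded_at_title_abstract": screened - kept_after_screening,
        "excluded_at_full_text": kept_after_screening - included,
        "included": included,
    }


flow = prisma_flow(
    identified=531, duplicates=286, kept_after_screening=37, included=24
)
print(flow)
```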

Data extraction

The extracted data from the selected articles included: type of publication (journal or conference), study objective, databases used, data types, presence of a multimodal approach, implementation of online learning, population type with age range, mental health problem, type of AI algorithm, models, and results.

Results

Distribution of key topics

Among the selected studies, five applied online learning and unimodal/multimodal AI methods to YMH problems, another five used multimodal AI without online learning, and an additional five relied solely on unimodal AI without online learning. The remaining studies met the inclusion criteria but lacked information on participants’ age ranges. Figure 2 illustrates the distribution of key topics covered by the accepted studies.

Representation of the main categories addressed in the analyzed studies (2015–2024), including unimodal AI applications for general mental health (MH + AI) or YMH problems (YMH + AI), multimodal approaches for YMH (YMH + AI + Multimodal), and AI approaches combined with online learning strategies (YMH + AI + Online Learning).

Figure 3 shows the distribution of the 19 studies that employed AI methods to advance research on YMH problems within the scoping review time frame.

Number of publications for AI in YMH from 2015 to 2024, with the red line representing the temporal trend in publication frequency.

YMH problems

Figure 4 shows the six YMH problems that were addressed in the reviewed works. Some of the articles that detected stress also added another problem, such as cognitive performance, emotions, or panic. In addition, cyberbullying was included because of its close relationship with depression, anxiety, and social problems49.

The main areas addressed in the selected studies from 2015 to 2024 include cyberbullying, depression, cognitive performance, emotions, panic, and stress.

Data types and AI models

Most unimodal approaches used text as input data, while studies implementing online learning and multimodal AI incorporated physiological signals along with sensor-based data, such as body temperature, geolocation (GPS), or other smartphone-based activity indicators. Additionally, videos and images were utilized in multimodal approaches that did not employ online learning. Figure 5 presents the number of articles that reported the use of these data types.

Distribution of data types used in the analyzed studies from 2015 to 2024, including images/videos, sensor-based data, physiological signals, and text.

Figure 6 illustrates the distribution of AI techniques used in the reviewed works. Approximately 60% of them applied ML techniques, either alone or in combination with DL. Notably, DL was predominant in studies that incorporated both online learning and multimodal AI.

Distribution of the algorithms used in the analyzed studies from 2015 to 2024, differentiating between ML, DL, and combined approaches.

Summary of extracted data

Beyond the distribution of data types and AI models, Tables 1 and 2 provide a comprehensive overview of study characteristics and methodological details. The information was divided into two groups: overview of studies and their key characteristics and specific information related to the AI methods used in the analyzed articles. Classification results are reported in F1-score, and regression results are reported in RMSE.
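For readers less familiar with the two reporting metrics, a minimal pure-Python sketch of the binary F1-score and of RMSE follows. The label and value lists are made-up examples, and the reviewed studies may have used averaged or multi-class variants of F1.

```python
import math


def f1_score(y_true, y_pred, positive=1):
    """Harmonic mean of precision and recall for the positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


def rmse(y_true, y_pred):
    """Root mean squared error between observed and predicted values."""
    squared = [(t - p) ** 2 for t, p in zip(y_true, y_pred)]
    return math.sqrt(sum(squared) / len(squared))


# Made-up examples: stress labels (1 = stressed) and continuous scores.
print(f1_score([1, 1, 0, 0], [1, 0, 1, 0]))
print(rmse([2.0, 4.0], [1.0, 5.0]))
```

F1 ranges from 0 to 1 with higher values being better, while RMSE is expressed in the units of the predicted quantity with lower values being better, so the two are not directly comparable across tasks.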

Discussion

YMH is a key public health concern with long-term societal implications. A growing number of studies are leveraging advances in AI/ML to improve the prevention, diagnosis, and treatment of mental health disorders in the general population. Similarly, research on YMH has started to follow this trend, emphasizing the need for targeted interventions. Therefore, increasing research efforts focused on this population and implementing key findings is essential. The present scoping review provides an overview of current methodologies applied to YMH with emphasis on emerging trends in multimodal approaches, given the heterogeneity of data sources involved (e.g., physiological signals, clinical records, social media, and self-reports), and online learning techniques, due to the continuous and dynamic nature of these data streams. Together, these dimensions define a promising yet under-explored intersection with considerable potential for advancing the diagnosis, monitoring, and treatment of YMH problems.

Despite this promise, the number of studies directly addressing YMH with AI remains limited. This observation should be interpreted within the broader context of the field, where research on AI in mental health more generally has expanded substantially over the past decade, encompassing hundreds of studies across detection, diagnosis, and intervention. Yet, the specific subset focusing on youth populations and particularly those employing multimodal methods or online learning remains relatively small. This gap underscores that youth-focused applications of AI represent not only an emerging but also a largely underdeveloped research niche within the larger landscape of AI and mental health, highlighting a critical opportunity for future investigation.

Overview of key topics

A total of 19 studies directly addressing YMH problems were identified, with the majority published between 2022 and 2024. The highest number appeared in 2024, particularly in the “YMH + AI” and “YMH + AI + Online Learning” categories (Fig. 2 and Table 1). Although publication counts across the four topics (“YMH + AI + Online Learning”, “YMH + AI + Multimodal”, “YMH + AI” and “MH + AI”) were comparable, a temporal trend indicates increasing incorporation of online learning methods in YMH research. In contrast, despite the increasing use of multimodal data in broader AI applications, no clear preference for unimodal or multimodal approaches was observed in the reviewed YMH studies (Fig. 3 and Table 1).

Multimodal online learning

Although limited in number, a few studies have examined the use of online learning combined with multimodal AI in YMH problems. Andreas et al. applied an online transfer learning approach using CNN to classify stress based on the WESAD dataset, which includes physiological signals such as ECG and EDA50,51. Two years later, they introduced an updated version of their model, evaluated on both the SWELL-KW multimodal dataset that includes physiological data from office workers performing document editing tasks under varying levels of stress52 and WESAD. The updated model demonstrated improved robustness and performance53. These studies highlight the need to distinguish between homogeneous domains—where source and target data share similar feature spaces—and heterogeneous domains, which involve differences in modality, task, or distribution54. In heterogeneous settings, effective online transfer learning requires identifying and aligning common and domain-specific attributes53. Furthermore, both studies address concept drift in online learning, referring to temporal changes in the joint data distribution that can negatively affect model performance over time55,56.
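One simple way to notice concept drift in a deployed model is to monitor its recent error rate against a reference level. The sketch below is a deliberately simplified illustration and not the approach used in the cited studies; the class name, window size, and tolerance are assumptions.

```python
from collections import deque


class WindowDriftMonitor:
    """Flag possible drift when the recent error rate rises above a reference."""

    def __init__(self, window_size=50, tolerance=0.2):
        self.window_size = window_size
        self.tolerance = tolerance
        self.recent = deque(maxlen=window_size)
        self.reference = None  # error rate of the first full window

    def update(self, was_error):
        """Record one prediction outcome and return True if drift is suspected."""
        self.recent.append(1 if was_error else 0)
        if len(self.recent) < self.window_size:
            return False
        rate = sum(self.recent) / self.window_size
        if self.reference is None:
            self.reference = rate
            return False
        return rate - self.reference > self.tolerance


monitor = WindowDriftMonitor(window_size=10, tolerance=0.2)
stable = [monitor.update(False) for _ in range(10)]   # model is correct
shifted = [monitor.update(True) for _ in range(10)]   # model starts failing
print(any(stable), shifted[-1])
```

A rising error rate is only a proxy for a change in the joint data distribution, so a flag from such a monitor would typically trigger further checks or a model update rather than serve as proof of drift.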

Luo et al. proposed a branched deep learning model based on hierarchical multitask learning for concurrent stress classification and dynamic clustering57. The model was evaluated using the Dartmouth StudentLife dataset—comprising smartphone and wearable sensor data, along with psychological surveys from 83 students over two academic terms58—and WESAD. Two architectures were tested: CALM-Net (using LSTM) and CATran-Net (using Transformers). Both incorporated what the authors describe as an online learning setting to enable continuous model updates. However, while the method demonstrates model adaptation through incremental learning and subject-specific parameterization, it does not explicitly address other key aspects typically associated with online learning, such as continuous updates from a real-time data stream or concept drift detection. In addition, a branched extension to dynamic clustering was introduced, assigning new users to groups with similar stress patterns. This aims to address the cold start problem defined as the challenge of making predictions for unseen users with limited data59. Branched CALM-Net achieved F1-scores of 0.883 ± 0.023 (first week) and 0.912 ± 0.013 (up to second week) in StudentLife, and 0.960 ± 0.061 using 60% of WESAD. These results showed comparable performance to models trained on the full StudentLife dataset60,61.
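The intuition behind assigning a new user to a group with similar stress patterns can be sketched as a nearest-centroid lookup. This is only an illustration of the cold start idea under assumed inputs: the group names, the two-dimensional feature vectors, and the distance measure are hypothetical, and the cited model learns its clustering inside the network rather than in this way.

```python
import math


def nearest_cluster(user_vector, centroids):
    """Return the name of the cluster whose centroid is closest to the user."""
    return min(
        centroids,
        key=lambda name: math.dist(user_vector, centroids[name]),
    )


# Hypothetical centroids summarizing two groups of existing users.
centroids = {
    "low_reactivity": [0.1, 0.2],
    "high_reactivity": [0.8, 0.9],
}

# A new user with only a few days of data, summarized as one vector.
new_user = [0.7, 0.8]
print(nearest_cluster(new_user, centroids))
```

The new user can then start from the model parameters associated with the assigned group instead of from a model with no user-specific information.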

Unimodal online Learning

Sah et al. proposed one of the earliest approaches resembling online learning in unimodal YMH by leveraging user-specific EDA signals from the WESAD dataset to develop personalized stress detection models62. Their framework first trains a general CNN model and subsequently adapts it to individual users through incremental learning with user-specific data. Although the authors describe this process as online learning, the approach focuses on model personalization rather than continuous updates from a real-time data stream.

Moontaha et al. applied online learning to EEG-based emotion recognition by incrementally updating models during real-time EEG signal acquisition63. These models were initially trained offline using the AMIGOS dataset64 and an additional dataset collected by the authors. The results indicate that online learning enhances classification performance compared to models trained exclusively offline, achieving F1-score improvements of 23.9% for arousal and 25.8% for valence over previous work, as reported in ref. 63.

Together, the reviewed multimodal and unimodal studies applying online learning in YMH report improved performance compared to static (offline) models, particularly in emotion classification tasks. For example, Moontaha et al. reported improvements of up to 23.9% and 25.8% in arousal and valence detection using real-time EEG signals63. These results highlight the effectiveness of online learning for real-time adaptation in multimodal and unimodal settings. However, it should be noted that not all studies implement true streaming online learning; several rely on incremental learning or data evaluated offline rather than continuous real-time model updates. Notably, all identified applications focused exclusively on classification tasks; no regression-based study incorporated online learning (Table 1).

YMH problems

Stress, emotions, and depression were the most frequently explored detection targets. All studies that addressed multiple problems included stress, possibly due to its strong association with other mental health indicators (Fig. 4 and Table 2)65,66. Additionally, all studies that incorporated online learning, as well as those categorized under the “MH + AI” topic, focused on stress and/or emotion detection. A similar trend was observed in studies employing purely multimodal approaches, with the exception of ref. 67, which focused on depression detection using audio and text data. The unimodal approaches applied to YMH were the most diverse, covering depression, cognitive performance, stress, emotions, and cyberbullying62,63,68,69,70,71,72,73,74,75,76,77,78,79, as detailed in Table 2.

Figure 4 further illustrates how current research is concentrated on symptom-level or at-risk states, with stress, emotions, and cognitive performance representing the majority of targets, while only a smaller number of studies address depression, which is more closely aligned with a recognized clinical condition.

This imbalance suggests that AI applications in YMH problems are predominantly oriented toward the detection of early symptoms rather than the diagnosis or intervention for well-defined clinical disorders.

Data types

Physiological signals, videos, and images

Half of the analyzed articles utilized physiological signals, with cardiac signals being the most frequently used51,53,73,80,81,82, followed by electrodermal activity51,53,62,82, brain signals63,72,77, voice57,67,81, and eye-tracking71. Additionally, videos and images were employed for facial expression recognition83 and the analysis of handwritten text produced by students84.

Text, sensor-based data, and questionnaires

Text data appeared in multiple formats, including questionnaires, social media posts, surveys, and interview transcripts67,69,70,74,75,76,78,79,84,85. Approximately one-fourth of the studies incorporated sensor-based data, capturing parameters such as GPS location, temperature, heart rate (via infrared sensors), sleep patterns, and smartphone usage51,53,57,62,73,81 as detailed in Fig. 5 and Table 2.

3D skeletal joints

Notably, one study proposed an innovative approach using 3D skeletal joint data, captured through Kinect cameras (Microsoft Corp., Redmond, WA, USA), to analyze participants’ gait for depression detection68.

AI models

Most studies employed ML models, primarily in unimodal applications without online learning. The most commonly used models included SVM, RF, DT, and GB-based models63,68,71,72,73,74,75,76,77,79,80,81,85. In contrast, seven studies implemented deep learning (DL) models, most of which incorporated online learning. In these cases, CNNs and LSTMs were the most frequently used51,53,57,62,69,78,83. Additionally, two studies combined both ML and DL approaches70,82 (Fig. 6 and Table 2). Finally, the model categorized as “Other” in Fig. 6 corresponds to the study by Roy et al. who proposed a lexical analysis-based model for sentiment detection using student handwriting images and social media text84.

Validation and evaluation practices

Across the reviewed studies, most rely on offline validation strategies, primarily cross-validation67,71,75,80,81 or leave-one-out (LOO) validation68,72,82, performed on previously collected datasets. While these approaches are useful for estimating model performance, they do not necessarily reflect real-world deployment conditions, where data arrive sequentially and distributions may change over time. Only a limited number of works explicitly simulate online scenarios through progressive or streaming validation schemes51,53,57,62, and even fewer evaluate their models in real-world environments63,73. This gap between offline evaluation and real-world deployment makes it difficult to assess the practical robustness and longitudinal stability of the proposed methods.
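A progressive (often called prequential or test-then-train) validation scheme can be sketched as follows: each incoming sample is first used to test the current model and only afterwards to update it. The majority-class learner and the toy stream are placeholders chosen to keep the example self-contained and are not drawn from the reviewed studies.

```python
class MajorityClass:
    """Placeholder online learner that predicts the most frequent label so far."""

    def __init__(self):
        self.counts = {}

    def predict(self, x):
        if not self.counts:
            return None  # no data seen yet
        return max(self.counts, key=self.counts.get)

    def learn(self, x, y):
        self.counts[y] = self.counts.get(y, 0) + 1


def prequential_accuracy(stream, model):
    """Test on each sample before training on it, in arrival order."""
    correct = 0
    total = 0
    for x, y in stream:
        if model.predict(x) == y:
            correct += 1
        total += 1
        model.learn(x, y)
    return correct / total if total else 0.0


# Toy stream of (features, label) pairs; features are unused by this learner.
stream = [(None, 1), (None, 1), (None, 0), (None, 1)]
print(prequential_accuracy(stream, MajorityClass()))
```

Unlike cross-validation, this scheme never evaluates the model on data it has already seen and preserves temporal order, so it is closer to the conditions of sequential deployment.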

Similarly, external validation across independent datasets remains uncommon. Although a few studies evaluate models on more than one dataset51,53,57,63,67,73, the majority of the reviewed works rely on a single dataset68,69,70,71,74,76,77,78,79,80,81,82,83,84,85. This increases the risk of overfitting to dataset-specific characteristics such as sensor configuration, experimental protocol, or population demographics. Additionally, participant samples are often small and demographically homogeneous, with many datasets composed mainly of college, university, or graduate students within relatively narrow age ranges. Such population bias restricts the applicability of the reported results to broader or more diverse youth populations.

Ethical and practical challenges

The deployment of AI systems in YMH contexts also raises important practical and ethical challenges. Unlike static models, adaptive systems continuously update their parameters based on incoming data, which introduces additional concerns regarding data governance, transparency, and system oversight86. In youth populations, data governance is particularly sensitive because longitudinal behavioral and physiological data are often collected from minors87,88. This requires robust consent and assent procedures, clear policies for data retention and secondary use, and mechanisms allowing participants or guardians to withdraw data from ongoing model updates.

Trustworthiness is another critical issue. Because adaptive models evolve over time, the reasoning behind predictions may change as the model learns from new data. This dynamic behavior can make it difficult for clinicians to understand model outputs or maintain trust in the system89. Providing interpretable explanations, clear documentation, and regular auditing of model behavior is therefore essential.

Future studies and trends

Although the reviewed literature demonstrates promising progress in applying AI to YMH problems, several gaps remain open for future research. First, most existing studies target symptom-level or at-risk state indicators, while fewer address well-defined clinical conditions. Future work should broaden the typology of mental health problems considered, integrating under-explored conditions, thereby enabling more comprehensive clinical applications.

Second, methodological diversity remains limited. A substantial proportion of studies rely on unimodal data, whereas multimodal approaches could improve robustness and generalizability. The scarcity of demographic details also highlights the need for larger and more inclusive datasets that reflect age, gender, and cultural differences within youth populations.

Third, longitudinal and intervention-focused studies are largely absent. Current evidence is dominated by detection tasks, but advancing toward diagnosis, prognosis, and intervention design will require datasets and models capable of capturing changes over time. This progression is critical if AI is to support not only early identification but also the personalization of interventions for young people. Therefore, future research should prioritize systematic cross-dataset validation as well as longitudinal and real-world evaluations to better assess the generalizability and translational potential of machine learning models.

Finally, the rapid evolution of large language models (LLMs) and generative AI tools, such as ChatGPT, opens a novel research direction. These technologies could enable scalable, conversational support systems, assist clinicians in triage, or provide adaptive learning resources tailored to youth needs. However, rigorous evaluation of their ethical, clinical, and developmental implications will be essential to ensure safe and equitable implementation.

Limitations of the scoping review

The studies included in this scoping review were carefully selected following a rigorous screening and review process and adhering to PRISMA guidelines. However, some relevant papers on YMH may have been excluded if they were not indexed in the selected databases or if their terminology did not match the search strings used. Additionally, because few studies incorporated online learning, we broadened the scope to include articles directly related to YMH even if they did not implement online learning, in order to provide a more comprehensive overview. Another challenge was the lack of demographic information in certain datasets, which limited our ability to verify whether a given study specifically targeted youth populations. Finally, it remains difficult to differentiate mental health problems from neurological conditions (such as viral, bacterial, or parasitic infections, aneurysms, arteriovenous malformations, and brain tumors) that affect the frontal cortex and may lead to mental health symptoms.

Conclusion

This scoping review examined AI methodologies applied to YMH, with a focus on the integration of online learning and multimodal approaches for diagnosis, monitoring, and intervention. The findings suggest that research in this domain is still emerging, highlighting both the need and opportunity for further investigation. Several challenges must be addressed for these approaches to evolve into viable clinical or real-world tools. These include the availability and long-term validity of multimodal data from youth populations, the lack of demographic information in certain datasets, variability in data collection contexts (homogeneous vs. heterogeneous), the cold start problem, concept drift, ethical and logistical concerns associated with studying minors, and the high computational demands of training robust AI models.

The reviewed studies represent preliminary efforts to address these challenges, and encouragingly, the volume of research in this area is growing. Notably, the use of online learning has demonstrated improvements in model adaptability and performance, features that are essential for developing translational AI models suitable for real-world deployment. DL methods were predominantly employed in multimodal and online learning settings, whereas ML approaches remain largely unexplored in this context.

Physiological signals and sensor-based data were the most commonly used modalities in multimodal and online learning studies, reflecting the current data availability and infrastructure. This highlights the urgent need to develop standardized data collection protocols and openly accessible datasets tailored to youth populations. Taken together, our findings suggest that AI research on YMH problems remains focused on symptom-level or at-risk states, with relatively fewer studies focusing on clinically diagnosable disorders such as depression. Future research should broaden this scope, incorporating a wider range of conditions and explicitly addressing diagnostic and intervention outcomes, to fully realize the potential of AI in supporting YMH.

Data availability

No datasets were generated or analyzed during the current study.

References

1. World Health Organization. World mental health report: Transforming mental health for all https://www.who.int/publications/i/item/9789240049338 (2022).
2. World Health Organization. Improving the mental and brain health of children and adolescents https://www.who.int/activities/improving-the-mental-and-brain-health-of-children-and-adolescents (2024).
3. World Health Organization. Mental health of adolescents https://www.who.int/news-room/fact-sheets/detail/adolescent-mental-health (2024).
4. Georgiades, K. et al. Six-month prevalence of mental disorders and service contacts among children and youth in Ontario: evidence from the 2014 Ontario Child Health Study. Can. J. Psychiatry 64, 246–255 (2019).
5. Canadian Institute for Health Information. Children and youth mental health in Canada https://www.cihi.ca/en/children-and-youth-mental-health-in-canada (2020).
6. Saunders, N. R. et al. Utilization of physician-based mental health care services among children and adolescents before and during the COVID-19 pandemic in Ontario, Canada. JAMA Pediatr. 176, e216298 (2022).
7. Stephenson, E. Mental disorders and access to mental health care https://www150.statcan.gc.ca/n1/pub/75-006-x/2023001/article/00011-eng.htm (2023).
8. Alowais, S. A. et al. Revolutionizing healthcare: the role of artificial intelligence in clinical practice. BMC Med. Educ. 23, 689 (2023).
9. Bajwa, J. et al. Artificial intelligence in healthcare: transforming the practice of medicine. Future Healthc. J. 8, e188–e194 (2021).
10. Yu, K.-H., Beam, A. L. & Kohane, I. S. Artificial intelligence in healthcare. Nat. Biomed. Eng. 2, 719–731 (2018).
11. Rajpurkar, P., Chen, E., Banerjee, O. & Topol, E. J. AI in health and medicine. Nat. Med. 28, 31–38 (2022).
12. Ravì, D. et al. Deep learning for health informatics. IEEE J. Biomed. Health Inform. 21, 4–21 (2016).
13. Graham, S. et al. Artificial intelligence for mental health and mental illnesses: an overview. Curr. Psychiatry Rep. 21, 116 (2019).
14. Lee, E. E. et al. Artificial intelligence for mental health care: clinical applications, barriers, facilitators, and artificial wisdom. Biol. Psychiatry Cogn. Neurosci. Neuroimaging 6, 856–864 (2021).
15. Du-Harpur, X. et al. What is AI? Applications of artificial intelligence to dermatology. Br. J. Dermatol. 183, 423–430 (2020).
16. Janiesch, C. et al. Machine learning and deep learning. Electron. Mark. 31, 685–695 (2021).
17. Bishop, C. M. & Nasrabadi, N. M. Pattern Recognition and Machine Learning (Springer, 2006).
18. Shinde, P. P. & Shah, S. A review of machine learning and deep learning applications. In Proc. 2018 Fourth International Conference on Computing Communication Control and Automation (ICCUBEA) (IEEE, 2018).
19. Acosta, J. N. et al. Multimodal biomedical AI. Nat. Med. 28, 1773–1784 (2022).
20. Wang, F. & Preininger, A. AI in health: state of the art, challenges, and future directions. Yearb. Med. Inform. 28, 016–026 (2019).
21. Lipkova, J. et al. Artificial intelligence for multimodal data integration in oncology. Cancer Cell 40, 1095–1110 (2022).
22. Hoi, S. C. et al. Online learning: a comprehensive survey. Neurocomputing 459, 249–289 (2021).
23. Sahoo, D. et al. Online deep learning: learning deep neural networks on the fly. In Proc. 27th International Joint Conference on Artificial Intelligence (AAAI Press, 2018).
24. Silva, J. A. et al. Data stream clustering: a survey. ACM Comput. Surv. (CSUR) 46, 1–31 (2013).
25. Brownlee, J. Machine learning algorithms from scratch. https://machinelearningmastery.com (2019).
26. Chollet, F. Deep Learning with Python (Manning Publications, 2021).
27. Li, G. et al. Micro-blogging sentiment detection by collaborative online learning. In Proc. 2010 IEEE International Conference on Data Mining (IEEE, 2010).
28. Li, G. et al. Collaborative online multitask learning. IEEE Trans. Knowl. Data Eng. 26, 1866–1876 (2013).
29. Li, G. et al. Detecting cyberattacks in industrial control systems using online learning algorithms. Neurocomputing 364, 338–348 (2019).
30. Xu, K. et al. DroidEvolver: self-evolving Android malware detection system. In Proc. 2019 IEEE European Symposium on Security and Privacy (EuroS&P) (IEEE, 2019).
31. Hong, R. & Chandra, A. DLion: decentralized distributed deep learning in micro-clouds. In Proc. 30th International Symposium on High-Performance Parallel and Distributed Computing (ACM, 2021).
32. Ktena, S. I. et al. Addressing delayed feedback for continuous training with neural networks in CTR prediction. In Proc. 13th ACM Conference On Recommender Systems (ACM, 2019).
33. Yang, Y. et al. Adaptive deep models for incremental learning: Considering capacity scalability and sustainability. In Proc. 25th ACM SIGKDD International Conference on Knowledge Discovery & Data Mining (ACM, 2019).
34. Losing, V., Hammer, B. & Wersing, H. Incremental on-line learning: a review and comparison of state of the art algorithms. Neurocomputing 275, 1261–1274 (2018).
35. Ramanath, R. et al. Lambda learner: fast incremental learning on data streams. In Proc. 27th ACM SIGKDD Conference on Knowledge Discovery & Data Mining, 3492–3502 (ACM, 2021).
36. Diaz, T. et al. A call for standardized age-disaggregated health data. Lancet Healthy Longev. 2, e436–e43 (2021).
37. DataReportal. Digital 2017: Bizarre surprises in Facebook audience data https://datareportal.com/reports/digital-2017-bizarre-surprises-in-facebook-audience-data (2017).
38. DataReportal. Digital 2018: Reddit overtakes Twitter https://datareportal.com/reports/digital-2018-reddit-overtakes-twitter (2018).
39. DataReportal. Digital 2021: October global statshot https://datareportal.com/reports/digital-2021-october-global-statshot (2021).
40. DataReportal. Digital 2023: October global statshot https://datareportal.com/reports/digital-2023-october-global-statshot (2023).
41. Fusar-Poli, P. Integrated mental health services for the developmental period (0 to 25 years): a critical review of the evidence. Front. Psychiatry 10, 355 (2019).
42. Singleton, L. Developmental differences and their clinical impact in adolescents. Br. J. Nurs. 16, 140–143 (2007).
43. Lin, J. & Guo, W. The research on risk factors for adolescents’ mental health. Behav. Sci. 14, 263 (2024).
44. Singh, S. P. & Tuomainen, H. Transition from child to adult mental health services: needs, barriers, experiences and new models of care. World Psychiatry 14, 358 (2015).
45. Merikangas, K. R., Nakamura, E. F. & Kessler, R. C. Epidemiology of mental disorders in children and adolescents. Dialogues Clin. Neurosci. 11, 7–20 (2009).
46. Liberati, A. et al. The PRISMA statement for reporting systematic reviews and meta-analyses of studies that evaluate healthcare interventions: explanation and elaboration. BMJ 339, e1000100 (2009).
47. Tricco, A. C. et al. PRISMA extension for scoping reviews (PRISMA-ScR): checklist and explanation. Ann. Intern. Med. 169, 467–473 (2018).
48. Page, M. J. et al. The PRISMA 2020 statement: an updated guideline for reporting systematic reviews. BMJ 372, n71 (2021).
49. Campbell, M. & Bauman, S. Cyberbullying: definition, consequences, prevalence. In Reducing Cyberbullying in Schools 3–16 (Elsevier, 2018).
50. Schmidt, P., Reiss, A., Duerichen, R., Marberger, C. & Van Laerhoven, K. Introducing WESAD, a multimodal dataset for wearable stress and affect detection. In Proc. 20th ACM International Conference on Multimodal Interaction 400–408 (ACM, 2018).
51. Andreas, A. et al. CNN-based emotional stress classification using smart learning dataset. In Proc. 2022 IEEE International Conferences on Internet of Things (iThings), GreenCom, CPSCom, SmartData, and Cybermatics (IEEE, 2022).
52. Koldijk, S., Sappelli, M., Verberne, S., Neerincx, M. A. & Kraaij, W. The SWELL knowledge work dataset for stress and user modeling research. In Proc. 16th International Conference on Multimodal Interaction 291–298 (ACM, 2014).
53. Andreas, A., Mavromoustakis, C. X., Song, H. & Batalla, J. M. Optimisation of CNN through transferable online knowledge for stress and sentiment classification. IEEE Trans. Consum. Electron. 70, 3088–3097 (2023).
54. Zhuang, F. et al. A comprehensive survey on transfer learning. Proc. IEEE 109, 43–76 (2020).
55. Lu, J. et al. Learning under concept drift: a review. IEEE Trans. Knowl. Data Eng. 31, 2346–2363 (2018).
56. Gama, J., Žliobaitė, I., Bifet, A., Pechenizkiy, M. & Bouchachia, A. A survey on concept drift adaptation. ACM Comput. Surv. 46, 1–37 (2014).
57. Luo, Y. et al. Dynamic clustering via branched deep learning enhances personalization of stress prediction from mobile sensor data. Sci. Rep. 14, 6631 (2024).
58. Wang, R. et al. Tracking depression dynamics in college students using mobile phone and wearable sensing. Proc. ACM Interact. Mob. Wearable Ubiquitous Technol. 2, 1–26 (2018).
59. Lika, B., Kolomvatsos, K. & Hadjiefthymiades, S. Facing the cold start problem in recommender systems. Expert Syst. Appl. 41, 2065–2073 (2014).
60. Mikelsons, G., Smith, M., Mehrotra, A. & Musolesi, M. Towards deep learning models for psychological state prediction using smartphone data: challenges and opportunities. Preprint at https://doi.org/10.48550/arXiv.1711.06350 (2017).
61. Adler, D. A., Wang, F., Mohr, D. C. & Choudhury, T. Machine learning for passive mental health symptom prediction: Generalization across different longitudinal mobile sensing studies. PLoS ONE 17, e0266516 (2022).
62. Sah, R. K. et al. Stressalyzer: Convolutional neural network framework for personalized stress classification. In Proc. 2022 44th Annual International Conference of the IEEE Engineering in Medicine & Biology Society (EMBC) (IEEE, 2022).
63. Moontaha, S., Schumann, F. E. F. & Arnrich, B. Online learning for wearable EEG-based emotion classification. Sensors 23, 2387 (2023).
64. Miranda-Correa, J. A., Abadi, M. K., Sebe, N. & Patras, I. AMIGOS: a dataset for affect, personality and mood research on individuals and groups. IEEE Trans. Affect. Comput. 12, 479–493 (2018).
65. Pervanidou, P. & Chrousos, G. P. Metabolic consequences of stress during childhood and adolescence. Metabolism 61, 611–619 (2012).
66. Quick, J. D., Horn, R. S. & Quick, J. C. Health consequences of stress. In Job Stress, 19–36 (Routledge, 2014).
67. Iyortsuun, N. K. et al. Additive cross-modal attention network (ACMA) for depression detection based on audio and textual features. IEEE Access 12, 20479–20489 (2024).
68. Lu, H. et al. A new skeletal representation based on gait for depression detection. In Proc. 2020 IEEE International Conference on E-health Networking, Application & Services (HEALTHCOM) (IEEE, 2021).
69. Mundra, A. et al. Enhancing mental health care: a proposed approach for depression detection using CNN and LSTM model. In Proc. 2023 Global Conference on Information Technologies and Communications (GCITC) (IEEE, 2023).
70. Krishna, N. B. et al. Exploring machine learning algorithms for the detection of depression. In Proc. 2024 International Conference on Smart Systems for Applications in Electrical Sciences (ICSSES) (IEEE, 2024).
71. Xia, Z. et al. Mental states and cognitive performance monitoring for user-centered e-learning system: a case study. In Transdisciplinarity and the Future of Engineering (SAGE Publications, 2022).
72. Li, Z. et al. A novel method of cognitive overload assessment based on a fusion feature selection using EEG signals. J. Neural Eng. 21, 066047 (2024).
73. Benchekroun, M. et al. Cross dataset analysis for generalizability of HRV-based stress detection models. Sensors 23, 1807 (2023).
74. Bisht, A. et al. Stress prediction in Indian school students using machine learning. In Proc. 2022 3rd International Conference on Intelligent Engineering and Management (ICIEM) (2022).
75. Hasan, M., Rundensteiner, E. & Agu, E. Automatic emotion detection in text streams by analyzing Twitter data. Int. J. Data Sci. Anal. 7, 35–51 (2019).
76. Khan, M. R. H., Afroz, U. S., Masum, A. K. M., Abujar, S. & Hossain, S. A. Sentiment analysis from Bengali depression dataset using machine learning. In Proc. 2020 11th International Conference on Computing, Communication, and Networking Technologies (ICCCNT), 1–5 (IEEE, 2020).
77. Athithan, S. et al. Emotion detection using EEG signals with ensemble learning. In Proc. 2022 2nd International Conference on Technological Advancements in Computational Sciences (ICTACS) (IEEE, 2022).
78. Doctor, F., Karyotis, C., Iqbal, R. & James, A. An intelligent framework for emotion aware e-healthcare support systems. In Proc. 2016 IEEE Symposium Series on Computational Intelligence (SSCI) 1–8 (IEEE, 2016).
79. Kumar, K. M. K. et al. Detection of bullying text: A multi-faceted approach using machine learning and natural language processing. In Proc. 2024 5th International Conference on Smart Electronics and Communication (ICOSEC) 180–186 (IEEE, 2024).
80. Talaat, F. M. & El-Balka, R. M. Stress monitoring using wearable sensors: IoT techniques in medical field. Neural Comput. Appl. 35, 18571–18584 (2023).
81. Sandulescu, V. & Dobrescu, R. Wearable system for stress monitoring of firefighters in special missions. In Proc. 2015 E-Health and Bioengineering Conference (EHB) (IEEE, 2015).
82. Di Martino, F. & Delmastro, F. High-resolution physiological stress prediction models based on ensemble learning and recurrent neural networks. In Proc. 2020 IEEE Symposium on Computers and Communications (ISCC) (IEEE, 2020).
83. Gowda, A. G. A. et al. Monitoring and alerting panic situations in students using artificial intelligence. In Proc. 2022 IEEE 5th Eurasian Conference on Educational Innovation (ECEI) (IEEE, 2022).
84. Roy, C. et al. Emotion predictor using social media text and graphology. In Proc. 2019 IEEE 9th International Conference on Advanced Computing (IACC) (IEEE, 2019).
85. Kumar Jha, M. et al. An efficient machine learning classification with feature selection techniques for depression detection from social media. In Proc. 2023 International Conference on Communication, Security and Artificial Intelligence (ICCSAI) (IEEE, 2023).
86. Janssen, M., Brous, P., Estevez, E., Barbosa, L. S. & Janowski, T. Data governance: organizing data for trustworthy artificial intelligence. Gov. Inf. Q. 37, 101493 (2020).
87. Monah, S. R. et al. Data governance functions to support responsible data stewardship in pediatric radiology research studies using artificial intelligence. Pediatr. Radiol. 52, 2111–2119 (2022).
88. Van der Hof, S. I agree, or do I: a rights-based analysis of the law on children’s consent in the digital world. Wis. Int’l LJ 34, 409 (2016).
89. Kondylakis, H. et al. A review of methods for trustworthy AI in medical imaging: the FUTURE-AI guidelines. IEEE J. Biomed. Health Informatics (2025).

Acknowledgements

The research team would like to thank all individuals who took part in this study for making it possible. This work was supported by the Natural Sciences and Engineering Research Council of Canada (NSERC) through NSERC RGPIN-2022-05438, and was also funded by Hamilton Health Sciences Research Strategic Initiatives. The funders had no role in the design of the study; in the collection, analyses, or interpretation of data; in the writing of the manuscript; or in the decision to publish the results.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Ramirez Campos, M.S., Barati, K., Samavi, R. et al. Multimodal artificial intelligence and online learning in youth mental health: a scoping review. npj Mental Health Res 5, 26 (2026). https://doi.org/10.1038/s44184-026-00207-4

DOI: https://doi.org/10.1038/s44184-026-00207-4